Building an AI Program Charter: A Practical Template with Examples

- Brian C. Newman

- AI Program Manager

Most enterprise artificial intelligence (AI) initiatives do not fail because the technology is flawed. They fail because teams never align on what the program actually is.

AI work often begins with pilots. A team experiments. Early results look promising. Momentum builds. Funding increases. At no point does anyone pause to define a shared operating contract across business, technology, risk, and finance.

Traditional project charters are sometimes reused, but they rarely fit. They assume a finite delivery, a stable scope, and a clean handoff to operations. AI programs challenge all three assumptions.

The absence of a program charter creates unpredictable outcomes. Scope expands quietly. Ownership fragments. Budget decisions become reactive. Risk is discovered late.

A strong AI program charter does not eliminate complexity. It makes complexity governable.

This is why EC-Council’s Certified AI Program Manager (CAIPM) treats chartering as a program leadership responsibility rather than an administrative step. The charter establishes how decisions will be made when certainty is unavailable.

What Makes an AI Program Charter Different

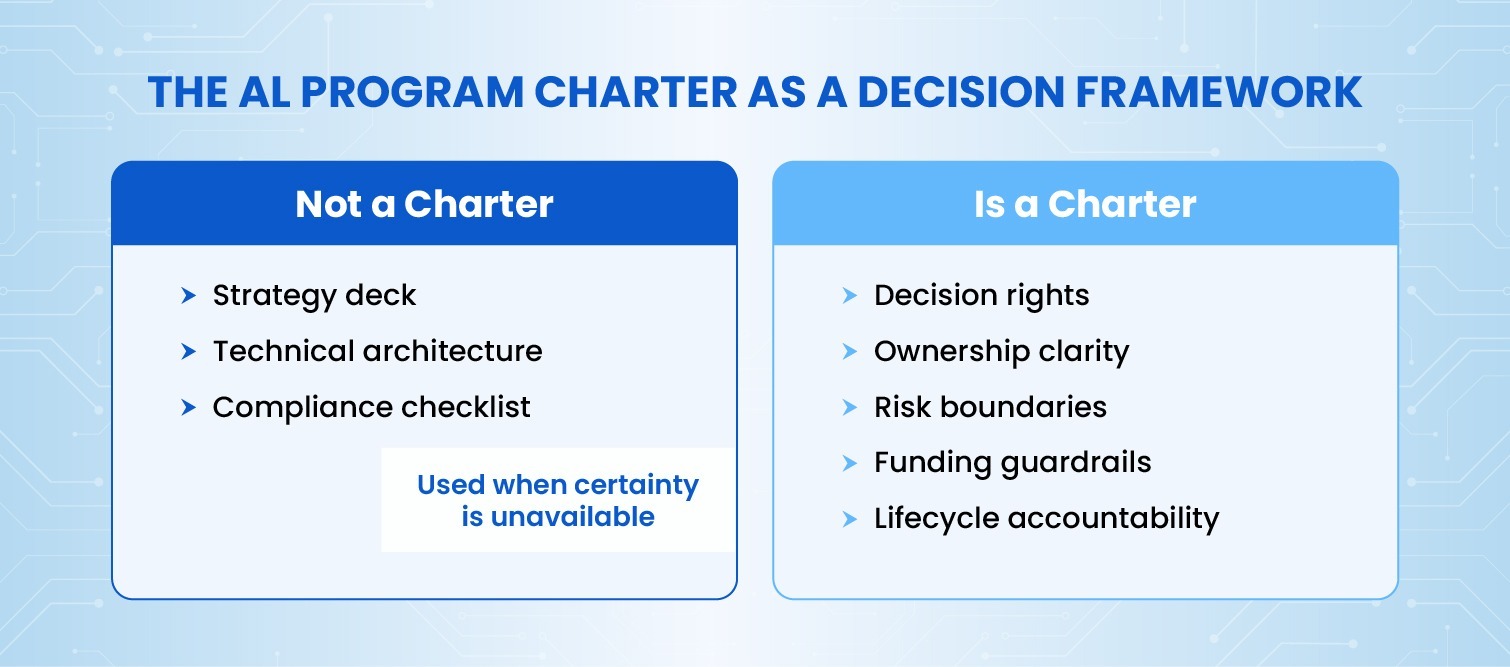

An AI program charter is not a strategy document. It is not a technical architecture. It is not a compliance checklist.

It is a decision framework. Three differences set it apart from a traditional project charter.

First, AI programs operate continuously. The charter must assume change after launch.

Second, AI outcomes are probabilistic. The charter must define acceptable variance, not just targets.

Third, AI risk evolves over time. The charter must embed governance, not bolt it on.

In mature organizations, disagreement rarely centers on goals. It centers on ownership. The charter exists to resolve that ambiguity before delivery pressure takes over.

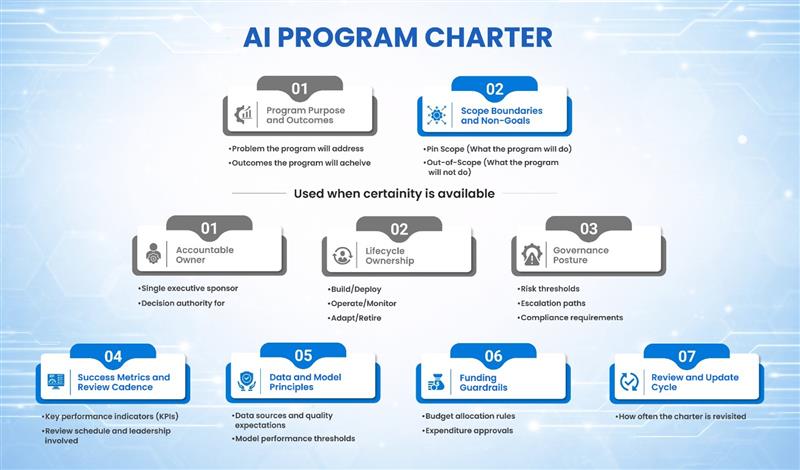

Core Components of an Effective AI Program Charter

Program Purpose and Business Outcomes

The charter begins by stating the problem the program exists to address.

This sounds obvious. It is rarely done well.

Many charters lead with the solution, such as “deploy AI to automate process X” or “implement predictive model Y.” Statements like these bias decisions before tradeoffs are understood.

A stronger framing anchors on outcomes. Improve decision quality. Reduce cycle time. Increase operational resilience. Enable scale without requiring proportional headcount growth.

Example

Scope Boundaries and Non-Goals

AI programs expand naturally. Every adjacent use case appears viable once data and models exist.

Without explicit boundaries, scope creep is treated as innovation.

Effective charters state what the program will not do. This is not defensive. It is protective.

Boundaries may include excluded data sources, disallowed decision types, or business units outside the initial scope.

Example

A charter may explicitly exclude fully autonomous decisions in regulated contexts during early phases. That boundary prevents later pressure from forcing premature risk exposure.

Clear non-goals give program managers authority to say no without escalating every decision.

Accountability and Decision Ownership

AI programs fail when accountability is distributed instead of assigned.

A charter must name a single accountable executive sponsor. This should not be a steering committee or a shared role.

It must also distinguish between program ownership and delivery leadership. Delivery teams build systems. Program owners own outcomes over time.

A common gap appears in model retirement. Who decides when a model should be retrained, replaced, or shut down? If the charter does not answer this, no one will.

Example

A weak charter states, “Information Technology (IT) and business will jointly own model performance.” A strong charter states, “The Chief Analytics Officer (CAO) owns model performance standards and retirement decisions. The Vice President (VP) of Customer Operations owns business impact thresholds and escalation authority.”

Lifecycle Ownership Model

Traditional projects hand off to operations. AI programs do not.

The charter must define ownership across the full lifecycle: building, deploying, operating, monitoring, adapting, and retiring.

Each phase requires named owners. In many organizations, no one owns adaptation. Performance degrades. Trust erodes. The system remains technically live but operationally irrelevant.

Example

A charter specifies that performance thresholds trigger review. Retraining authority is assigned. Sunset criteria are defined. Retirement is treated as a planned outcome, not failure.

Lifecycle clarity prevents orphaned systems.

Data and Model Management Principles

AI performance is closely tied to data quality. The charter should acknowledge this explicitly.

This section defines data sources in scope, quality expectations, and ownership. It also defines model performance thresholds and validation cadence.

Without this, teams argue about symptoms instead of causes.

Example

If accuracy drops below a defined range, the charter specifies whether retraining, data remediation, or model replacement is required, and the escalation path is clear. For instance, accuracy below 85 percent triggers an investigation, and accuracy below 80 percent triggers mandatory retraining or retirement.
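To make the escalation rule concrete, here is a minimal Python sketch. It assumes accuracy is reported as a fraction between 0 and 1 and reuses the illustrative 85 and 80 percent thresholds from the example. The `Action` labels and the `escalation_for` function are hypothetical names, not part of any standard or tool.

```python
from enum import Enum


class Action(Enum):
    """Hypothetical escalation actions a charter might define."""
    NONE = "no action"
    INVESTIGATE = "investigation"
    RETRAIN_OR_RETIRE = "mandatory retraining or retirement"


# Illustrative thresholds from the charter example; a real charter sets its own.
INVESTIGATION_THRESHOLD = 0.85
MANDATORY_ACTION_THRESHOLD = 0.80


def escalation_for(accuracy: float) -> Action:
    """Map an observed accuracy (0.0 to 1.0) to the charter-defined action."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError(f"accuracy must be between 0 and 1, got {accuracy}")
    if accuracy < MANDATORY_ACTION_THRESHOLD:
        return Action.RETRAIN_OR_RETIRE
    if accuracy < INVESTIGATION_THRESHOLD:
        return Action.INVESTIGATE
    return Action.NONE


if __name__ == "__main__":
    for observed in (0.91, 0.83, 0.78):
        print(f"{observed:.2f} -> {escalation_for(observed).value}")
```

The charter should state explicitly whether a reading of exactly 85 or 80 percent triggers action. This sketch treats the boundary values as passing, which is one reasonable choice rather than the only one.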

Governance, Risk, and Compliance Posture

Governance should not be a future activity.

The charter defines how governance is embedded into delivery. What regulatory assumptions apply? What documentation is required? How are explainability and auditability handled?

It also defines escalation paths. When risk thresholds are exceeded, who decides the next steps?

Example

A charter states that any material model change triggers a risk review. That expectation avoids surprise audits and retroactive rework. Material changes include alterations to training data sources, feature engineering logic, or prediction thresholds affecting customer-facing decisions.
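The material-change rule can also be written as a check that compares two versions of a model's configuration. The following Python sketch is illustrative only. The `ModelConfig` fields and the `material_changes` function are hypothetical. The sketch also assumes that the organization records training data sources, a version label for feature engineering logic, and per-decision prediction thresholds.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ModelConfig:
    """Hypothetical snapshot of the model attributes a charter treats as material."""
    training_data_sources: frozenset[str]
    feature_logic_version: str  # version label for feature engineering logic
    # Decision thresholds keyed by decision name, e.g. {"approve_refund": 0.70}.
    prediction_thresholds: dict[str, float] = field(default_factory=dict)
    # Decisions that directly affect customers.
    customer_facing_decisions: frozenset[str] = frozenset()


def material_changes(old: ModelConfig, new: ModelConfig) -> list[str]:
    """Return the reasons a change is material; an empty list means no review is triggered."""
    reasons = []
    if old.training_data_sources != new.training_data_sources:
        reasons.append("training data sources changed")
    if old.feature_logic_version != new.feature_logic_version:
        reasons.append("feature engineering logic changed")
    # Only thresholds on customer-facing decisions count as material here.
    customer_facing = old.customer_facing_decisions | new.customer_facing_decisions
    for decision in sorted(customer_facing):
        if old.prediction_thresholds.get(decision) != new.prediction_thresholds.get(decision):
            reasons.append(f"threshold changed for customer-facing decision '{decision}'")
    return reasons


if __name__ == "__main__":
    before = ModelConfig(
        training_data_sources=frozenset({"crm_export"}),
        feature_logic_version="v3",
        prediction_thresholds={"approve_refund": 0.70, "internal_triage": 0.50},
        customer_facing_decisions=frozenset({"approve_refund"}),
    )
    # Both thresholds move, but only one decision is customer-facing.
    after = ModelConfig(
        training_data_sources=frozenset({"crm_export"}),
        feature_logic_version="v3",
        prediction_thresholds={"approve_refund": 0.65, "internal_triage": 0.40},
        customer_facing_decisions=frozenset({"approve_refund"}),
    )
    reasons = material_changes(before, after)
    print("Risk review required" if reasons else "No risk review required")
    for reason in reasons:
        print(" -", reason)
```

In this sketch, a threshold change on an internal-only decision does not trigger review. Any change to data sources or feature logic does. A real charter would define exactly which decisions count as customer-facing.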

Funding and Budgeting Guardrails

AI programs require program-level funding logic.

The charter defines how pilots transition to scale. What criteria release additional budget? What costs are expected to recur?

Without this, successful pilots become unfunded liabilities.

Example

A charter specifies that scaling requires demonstrated operational readiness, not just model performance. Funding gates align with maturity signals. A pilot showing 92 percent accuracy does not automatically unlock production funding. The team must also demonstrate monitoring infrastructure, incident response protocols, and stakeholder training completion.
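A funding gate of this kind can be sketched as a checklist in which model performance is only one of several required conditions. In the Python sketch below, the `ReadinessEvidence` fields mirror the criteria named above. The function name and the `min_accuracy` default of 0.85 are assumptions for illustration, not values the charter prescribes.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ReadinessEvidence:
    """Hypothetical evidence a pilot team submits at a scaling funding gate."""
    model_accuracy: float            # observed pilot accuracy, 0.0 to 1.0
    monitoring_in_place: bool        # production monitoring infrastructure demonstrated
    incident_response_defined: bool  # incident response protocols documented
    stakeholder_training_done: bool  # stakeholder training completed


def funding_gate(evidence: ReadinessEvidence,
                 min_accuracy: float = 0.85) -> tuple[bool, list[str]]:
    """Return (approved, blockers); model performance alone never unlocks funding."""
    blockers = []
    if evidence.model_accuracy < min_accuracy:  # assumed minimum, set by the charter
        blockers.append("model accuracy below gate minimum")
    if not evidence.monitoring_in_place:
        blockers.append("monitoring infrastructure not demonstrated")
    if not evidence.incident_response_defined:
        blockers.append("incident response protocols not defined")
    if not evidence.stakeholder_training_done:
        blockers.append("stakeholder training incomplete")
    return (len(blockers) == 0, blockers)


if __name__ == "__main__":
    pilot = ReadinessEvidence(model_accuracy=0.92, monitoring_in_place=False,
                              incident_response_defined=False,
                              stakeholder_training_done=False)
    approved, blockers = funding_gate(pilot)
    print("Approved" if approved else "Not approved")
    for blocker in blockers:
        print(" -", blocker)
```

With this structure, a pilot at 92 percent accuracy that lacks monitoring, incident response, and training evidence comes back with three blockers and no approval. That matches the behavior the example describes.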

Budget clarity protects both finance and delivery teams.

Success Metrics and Review Cadence

Traditional project metrics, such as on-time and on-budget delivery, are insufficient.

The charter defines leading and lagging indicators. These include adoption, trust, performance stability, and risk events.

It also defines review cadence, for example monthly operational reviews, quarterly governance reviews, and an annual portfolio reassessment.

Example

Leading indicators include user adoption rates, data quality scores, and time to resolve model anomalies. Lagging indicators include business outcome achievement, audit findings, and customer satisfaction trends. Both categories matter. Leading indicators provide early warning. Lagging indicators confirm value delivery.

Metrics that are not reviewed are decoration. The charter makes review mandatory.

How to Develop an Effective AI Program Charter

Charter development is not a documentation exercise. It is a negotiation.

The process typically moves through three phases.

Alignment Phase

The program sponsor convenes stakeholders from business, technology, risk, finance, and legal.

Each function brings different priorities. Business wants speed. Risk wants control. Finance wants predictability. Technology wants flexibility.

The goal is not consensus. The goal is explicit agreement on decision rights.

This phase answers foundational questions. What problem justifies investment? What constraints are non-negotiable? Who owns what? Where will conflict be resolved?

Effective sponsors use this phase to surface disagreement early. A charter created without tension usually means important issues were avoided.

Drafting Phase

The program manager translates alignment conversations into a usable document.

Drafting is iterative. Early versions focus on structure and clarity. Later versions refine language and add examples. The document should be readable by someone unfamiliar with the program’s history.

Common mistakes appear here. These include charters that read like strategy presentations, charters overloaded with technical specifications, and charters written in compliance language that obscures decision logic.

The test is simple. Can a new executive read this charter and understand what decisions they own?

Approval and Activation Phase

The charter is reviewed and approved by the executive sponsor and key stakeholders.

Approval is not ceremonial. It signals that named owners accept their accountability. It establishes the charter as the authoritative reference for resolving disputes.

Activation means the charter is used. It is referenced in funding reviews. It is consulted when scope questions arise. It is updated when material assumptions change.

Charters that sit in shared drives after approval have failed regardless of content quality.

A Simple AI Program Charter Template

Effective charters fit on one or two pages. Longer documents become inaccessible under pressure.

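A functional template includes the following sections.

Program Purpose: The problem the program exists to address and the business outcomes it targets, stated without prescribing a solution.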

Scope Boundaries: A bulleted list of what is included and explicitly excluded. Both matter equally.

Accountable Owner: Single named executive with decision authority over program direction and resource allocation.

Lifecycle Ownership: Named owners for each phase. These phases include build, deploy, operate, monitor, adapt, and retire.

Data Principles: Data sources in scope, quality standards, ownership, and escalation paths for data issues.

Governance Model: How risk, compliance, and ethics reviews are embedded. Frequency and escalation triggers.

Funding Structure: How the budget is allocated across phases. Gates for releasing additional funding. Cost categories expected to recur.

Success Metrics: Leading and lagging indicators. Review cadence. Escalation thresholds.

Review and Update Cycle: How often the charter is revisited and who approves changes.

The template deliberately excludes technical architecture details, vendor selections, and implementation timelines. Those elements change frequently and belong in other documents.

The charter captures what should remain stable even as execution evolves.
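The template sections can also be captured as a lightweight data structure that flags gaps before approval. This Python sketch is a hypothetical illustration. The `ProgramCharter` class, its field names, and the `gaps` method are all assumptions. The sketch checks only the structural points discussed earlier: a single named owner, explicit non-goals, and an owner for every lifecycle phase.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Lifecycle phases as listed in the charter template.
LIFECYCLE_PHASES = ("build", "deploy", "operate", "monitor", "adapt", "retire")


@dataclass
class ProgramCharter:
    """Hypothetical one-page charter skeleton; field names are illustrative."""
    purpose: str
    accountable_owner: str  # one named executive, not a committee
    in_scope: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)
    lifecycle_owners: dict[str, str] = field(default_factory=dict)

    def gaps(self) -> list[str]:
        """List structural gaps the charter should close before approval."""
        problems = []
        if not self.accountable_owner.strip():
            problems.append("no accountable owner named")
        if not self.out_of_scope:
            problems.append("no explicit non-goals")
        for phase in LIFECYCLE_PHASES:
            if not self.lifecycle_owners.get(phase, "").strip():
                problems.append(f"no owner for '{phase}' phase")
        return problems


if __name__ == "__main__":
    charter = ProgramCharter(
        purpose="Reduce claims-handling cycle time",
        accountable_owner="VP of Customer Operations",
        in_scope=["claims triage recommendations"],
        out_of_scope=["fully autonomous claim denials"],
        lifecycle_owners={"build": "Data Science Lead", "deploy": "ML Platform Lead",
                          "operate": "Claims Operations", "monitor": "ML Platform Lead"},
    )
    for gap in charter.gaps():
        print("Gap:", gap)
```

If this check runs against a draft in which no one owns adaptation or retirement, it reports exactly those two phases. That is the orphaned-system gap described in the lifecycle section.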

Charter Relationship to Other Governance Artifacts

Confusion can arise about how the charter aligns with other program documents.

The AI strategy sets long-term priorities and the rationale for investing in AI. The program charter details management and ownership for specific initiatives. Risk frameworks describe controls applied across all programs, and technical architecture outlines platforms and integration as technology evolves.

The charter bridges strategy and execution by assigning accountability without specifying implementation. Effective AI governance keeps these documents distinct to prevent overlap and confusion.

Common Charter Failure Modes

Three patterns recur.

First, charters can be written as strategy decks that no one references. These documents explain the vision at length but avoid naming owners or defining decision rights. They feel authoritative but provide no operational value. When conflict arises, these charters offer no resolution path.

Second, charters can be overloaded with technical detail that obscures decisions. These documents specify model types, platform choices, and data schemas. The detail creates a false sense of precision. When assumptions change, the entire charter becomes obsolete. Program managers spend more time updating the charter than using it.

Third, charters can be written once and never revisited. These documents reflect conditions at program launch. Over time, scope shifts, owners change, and risk assumptions evolve. The charter becomes outdated. Teams stop referencing it because it no longer reflects reality.

In all cases, the document exists, but leadership intent does not.

How Mature Organizations Use the Charter

Mature organizations with established AI governance treat the charter as a living instrument.

The charter guides funding requests and determines whether a proposed scope expansion fits existing boundaries or requires a formal amendment. It is reviewed and updated whenever scope or risk changes significantly, and each update is formally approved and communicated to stakeholders.

In conflicts over priorities, the charter clarifies decision authority and escalation criteria. At the portfolio level, charters support consistent accountability while allowing for differences between programs.

CAIPM reinforces this usage by positioning the charter as a governance anchor, not a compliance artifact.

Implications for Program Managers and Executives

The quality of an AI program charter is a leading indicator of execution maturity.

Weak charters correlate strongly with stalled programs, budget overruns, and trust erosion.

Strong charters do not always prevent failure, but they make failure visible early and keep it manageable.

For executives, the charter is a mirror. It reflects whether the organization is prepared to manage AI as a system, not a series of experiments.

Program managers gain clarity and authority. A strong charter provides the foundation to say no to scope creep, escalate risk appropriately, and defend resource decisions.

The charter does not guarantee success. It creates the conditions where success becomes achievable.

Build Success in Enterprise AI

Enterprise AI does not fail suddenly. It fails through ambiguity.

The AI program charter is the simplest tool leaders have to reduce that ambiguity without slowing progress.

Organizations that invest the time to get it right scale faster, spend more predictably, and retain trust longer.

About the Author

Brian C. Newman

Brian C. Newman is a senior technology and AI program practitioner with more than 30 years of experience leading large-scale transformation across telecommunications, network operations, and emerging technologies. He has held multiple senior leadership roles at Verizon, spanning global network engineering, systems architecture, and operational transformation. Today, he advises enterprises on AI program management, governance, and execution, and has contributed to the design and instruction of EC-Council’s CAIPM and CRAGE programs.