MLOps for Program Managers: A Non-Technical Field Guide

- Imran Afzal

- AI Program Manager

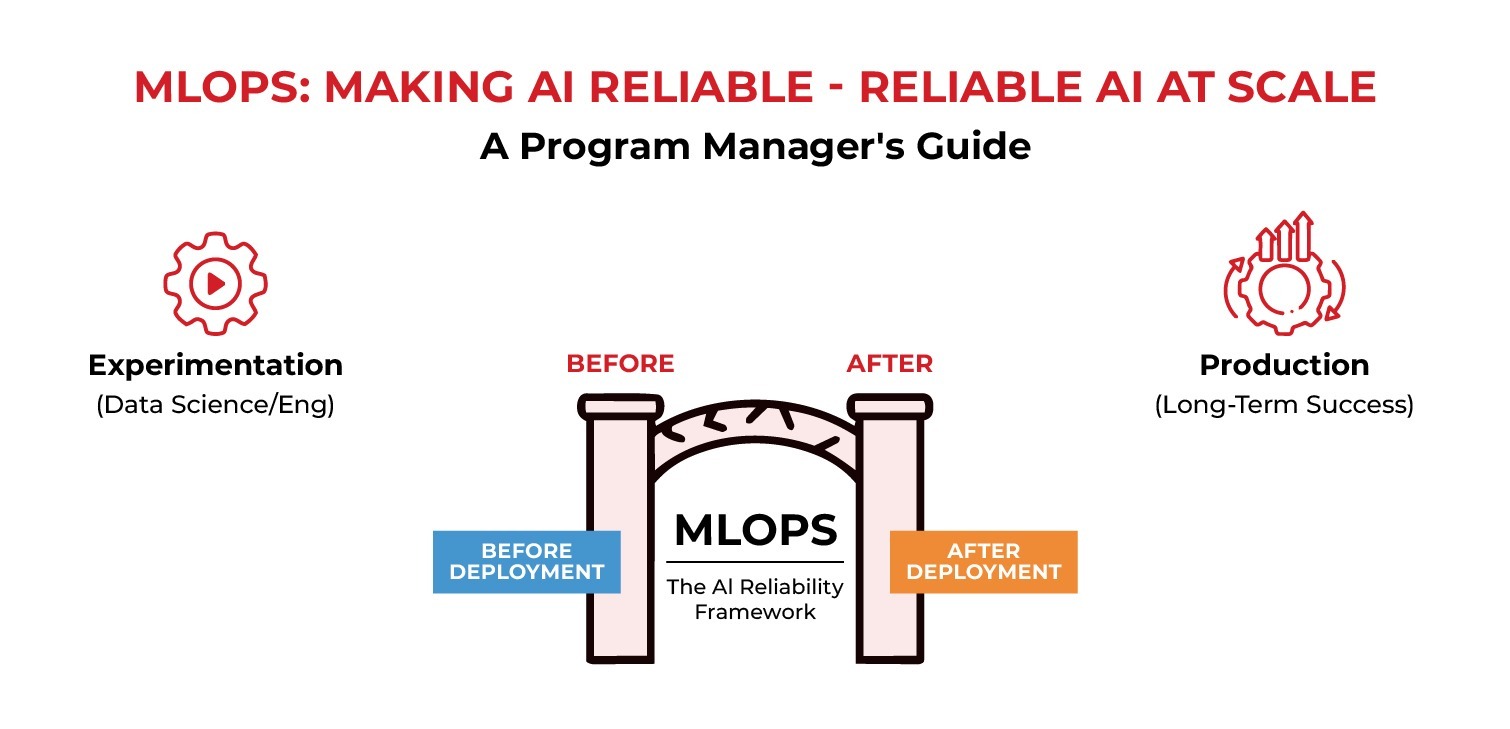

As artificial intelligence (AI) initiatives move from experimentation into production, many program managers find themselves repeatedly hearing the term “MLOps” without receiving a clear or practical explanation of what it means. In meetings, MLOps is often discussed as a technical capability owned by data science or engineering teams. However, this framing creates confusion and distance for program managers who are responsible for delivery, risk, and long-term outcomes.

The core aim of MLOps is to ensure AI systems can be deployed, monitored, and maintained reliably over time by addressing what happens after a model has been trained and tested. Where the experimentation stage asks whether a model can work, MLOps asks whether it can continue to work in real operating conditions. This distinction is critical because many AI initiatives fail not during development but after deployment, when performance degrades or accountability becomes unclear.

For program managers, MLOps is not about algorithms, model architecture, or feature engineering. It is about process, ownership, and operational discipline. MLOps defines how models are versioned, how changes to data are tracked, and how performance is measured once the system is live. Without these practices, even a high-performing model can quickly lose reliability and stakeholder trust.

MLOps: Making AI Reliable at Scale

Program managers must understand that AI systems are not static. Unlike traditional software, AI models depend on data that changes over time. Customer behavior shifts, business rules evolve, and external conditions fluctuate. When these changes occur, model performance can silently decline. MLOps provides the structure needed to detect these changes early and respond before they affect the business.

A common misconception is that MLOps is optional or only relevant for large organizations. In reality, MLOps benefits any AI system that affects decisions, customers, or operations. Without it, teams are often forced into reactive firefighting mode, responding to issues only after problems have surfaced. For program managers, this creates risk exposure and undermines confidence with leadership.

Effective MLOps also clarifies accountability. It answers practical questions such as who owns the model after deployment, who monitors performance, and who approves changes. Without clear ownership, issues such as data drift, unexpected outputs, or rising operational costs can persist unresolved. Program managers play a central role in ensuring these responsibilities are defined and communicated across teams.

When viewed through this lens, MLOps becomes less of a technical discipline and more of a governance and delivery framework. It helps program managers keep AI initiatives stable, auditable, and aligned with business goals long after the initial deployment. Understanding this perspective is the foundation for managing AI responsibly and successfully at scale.

Understanding MLOps Through a Program Management Lens

For program managers, MLOps is best understood as a delivery framework rather than a development one. While data scientists focus on building and improving models, program managers are responsible for ensuring that AI systems operate reliably, safely, and predictably once they are in use. MLOps provides the structure that makes this possible.

One of the core components of MLOps is model lifecycle management. This includes tracking which model version is currently deployed, understanding how it was trained, and knowing what data was used. From a program management perspective, this is about traceability and accountability. When a model produces unexpected results, teams must quickly determine whether the issue relates to data changes, configuration updates, or a new model release. Without proper versioning and documentation, diagnosing problems becomes slow and disruptive.
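For readers who want to see what this traceability can look like in practice, here is a minimal sketch in Python. It assumes a hypothetical release record; the names `ModelRelease`, `what_changed`, and every field are illustrative and do not come from any specific MLOps tool. The idea is only that if each release records its version, training data, and configuration, the question "what changed?" has a quick answer.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class ModelRelease:
    """One entry in a hypothetical model release log (illustrative fields)."""
    version: str       # label of the deployed model
    trained_on: str    # identifier of the training data snapshot
    config_hash: str   # fingerprint of the training configuration
    deployed_on: date  # when this release went live
    owner: str         # person or team accountable after deployment


def what_changed(previous: ModelRelease, current: ModelRelease) -> List[str]:
    """List which tracked attributes differ between two releases."""
    changes = []
    if previous.version != current.version:
        changes.append("model version")
    if previous.trained_on != current.trained_on:
        changes.append("training data")
    if previous.config_hash != current.config_hash:
        changes.append("configuration")
    return changes
```

When a model starts producing unexpected results, comparing the current release with the previous one narrows the investigation to the attributes that actually changed.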

Data monitoring is another critical element. AI models are highly dependent on the data they consume, and that data rarely stays constant. Customer behavior evolves, market conditions shift, and upstream systems change. MLOps practices allow teams to monitor incoming data and detect patterns that differ from what the model was originally trained on. For program managers, this capability is essential because silent data changes can gradually erode performance without triggering obvious failures.
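The check below is a deliberately simple illustration of data monitoring, not a production drift test. It assumes a single numeric input and flags it for review when the average of recent live values has moved more than a chosen number of standard deviations away from the training average. The function names and the default threshold of 2.0 are assumptions made for this sketch; real teams typically use richer statistical tests across many inputs.

```python
from statistics import mean, stdev


def drift_score(training_values, live_values):
    """Return how many training standard deviations the live mean has moved."""
    baseline_mean = mean(training_values)
    baseline_spread = stdev(training_values)
    if baseline_spread == 0:
        raise ValueError("training values have no spread; score is undefined")
    return abs(mean(live_values) - baseline_mean) / baseline_spread


def needs_review(training_values, live_values, threshold=2.0):
    """Flag the input when the shift exceeds the (assumed) threshold."""
    return drift_score(training_values, live_values) > threshold
```

A check like this can run on a schedule and raise an alert to the named owner, which turns a silent data change into a visible event someone is responsible for.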

Performance monitoring extends beyond accuracy metrics. While technical teams may focus on precision or recall, program managers should also care about operational and business-level indicators. These include response times, system availability, cost of execution, and downstream business impact. MLOps frameworks bring these metrics together, allowing teams to see how technical performance translates into real-world outcomes.

Another important aspect of MLOps is change management. AI systems evolve through retraining, parameter updates, or infrastructure changes. Program managers must ensure that these changes follow a controlled process. This includes defining approval workflows, testing requirements, and rollback procedures. Without this discipline, updates can introduce new risks or break downstream dependencies.
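A controlled change process can be pictured as a simple release gate. The sketch below is a hypothetical example: the `ChangeRequest` fields and the `release_blockers` function are invented for illustration and stand in for whatever approval tooling an organization already uses. It shows the three controls named above (approval, testing, rollback) as conditions that must all be met before a change ships.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ChangeRequest:
    """A proposed change to a deployed AI system (illustrative fields)."""
    description: str
    approved_by: str = ""       # empty until someone signs off
    tests_passed: bool = False  # result of the agreed testing requirements
    rollback_plan: str = ""     # how to return to the previous state


def release_blockers(change: ChangeRequest) -> List[str]:
    """Return the reasons this change is not yet ready to release."""
    blockers = []
    if not change.approved_by:
        blockers.append("missing approval")
    if not change.tests_passed:
        blockers.append("tests not passed")
    if not change.rollback_plan:
        blockers.append("no rollback plan")
    return blockers
```

An empty list means the change has cleared the gate; anything else gives the program manager a concrete item to follow up on.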

Collaboration across teams is central to effective MLOps. Data scientists, engineers, operations teams, security teams, and business stakeholders all contribute to the success of an AI system. Program managers help coordinate these groups by establishing shared timelines, defining responsibilities, and aligning expectations. MLOps provides the common framework that enables this coordination.

Ultimately, these MLOps practices give program managers the visibility and control needed to guide AI initiatives toward sustainable success. They transform AI from an experimental effort into a managed operational system.

MLOps as a Governance and Long-Term Value Framework

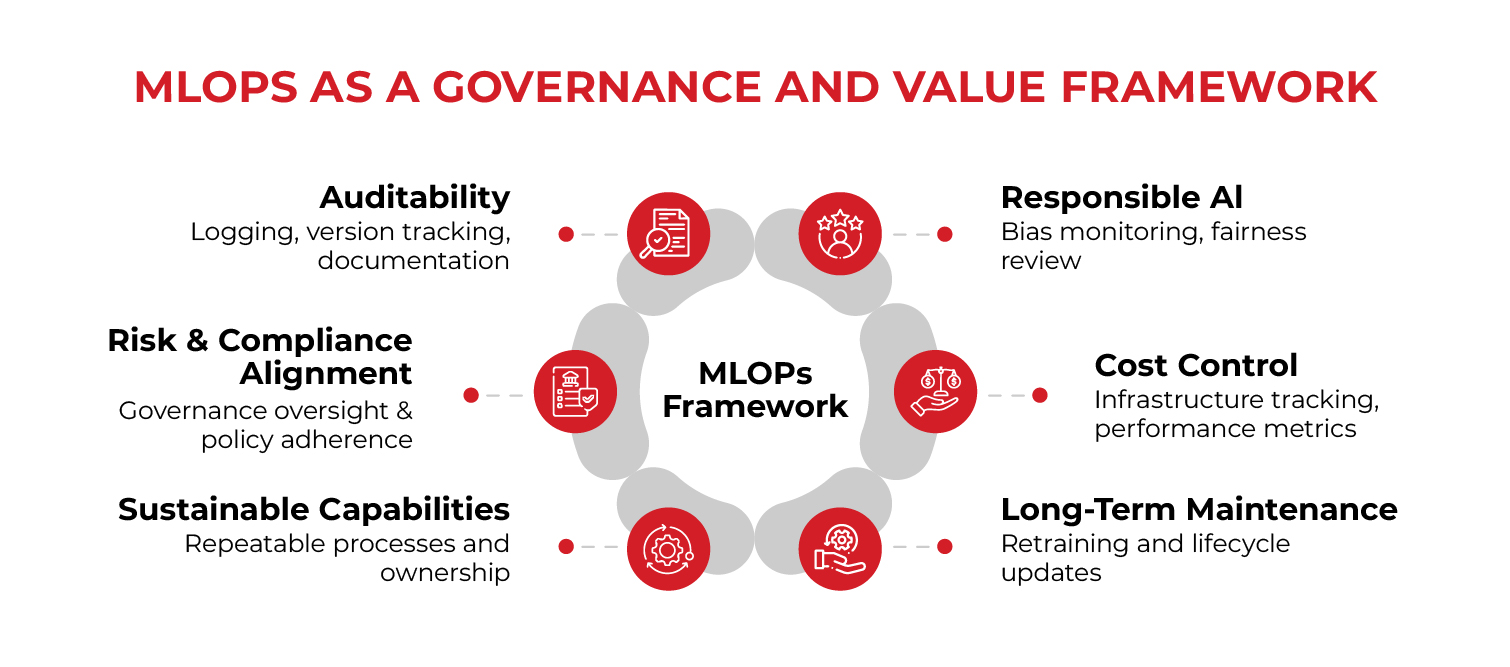

As AI systems mature and become embedded in core business processes, the role of MLOps extends beyond operational reliability into governance and long-term value management. For program managers, this is where MLOps becomes especially important. It provides the structure needed to ensure AI systems remain aligned with business objectives, ethical standards, and organizational risk tolerance over time.

One of the key governance benefits of MLOps is auditability. AI-driven decisions often need to be explained to internal stakeholders, regulators, or customers. MLOps practices such as logging, version tracking, and documentation create a clear record of how models were trained, deployed, and updated. For program managers, this visibility reduces uncertainty and makes it easier to respond to questions or investigations without disrupting operations.

MLOps also supports responsible AI practices. As concerns around bias, fairness, and transparency increase, organizations need mechanisms to monitor how models behave in real-world conditions. Program managers can use MLOps processes to ensure that performance reviews include not only technical accuracy but also ethical and compliance considerations. This proactive approach helps organizations identify issues early and take corrective action before trust is damaged.

Cost control is another area where MLOps adds value. AI systems can become expensive to operate if usage grows faster than expected or if infrastructure is not optimized. MLOps enables teams to track operational costs alongside performance metrics, allowing program managers to make informed decisions about scaling, optimization, or prioritization. This visibility helps prevent AI initiatives from becoming open-ended investments without clear returns.

Long-term maintenance is often overlooked during early development stages. Models require retraining, data pipelines evolve, and business requirements change. MLOps establishes repeatable processes for managing these changes without introducing instability. For program managers, this means fewer surprises and a clearer path for continuous improvement.

Perhaps most importantly, MLOps helps shift AI initiatives from isolated projects to sustainable capabilities. When AI systems are managed through consistent processes, clear ownership structures, and measurable outcomes, they become part of the organization’s operational fabric. Program managers can then focus on scaling value rather than constantly resolving issues.

In this way, MLOps serves as both a delivery and governance framework. It enables program managers to maintain control, manage risk, and demonstrate value long after initial deployment. By understanding MLOps through this non-technical lens, program managers are better equipped to guide AI initiatives toward stability, accountability, and long-term success.

Practical MLOps Pitfalls Program Managers Should Anticipate

Even well-planned AI initiatives can struggle if common MLOps pitfalls are not anticipated early. One frequent issue is treating deployment as the finish line rather than the beginning of an operational lifecycle. When models are deployed without defined monitoring, ownership, or maintenance plans, performance issues often surface slowly and go unnoticed until business impact becomes visible.

Another common challenge is unclear responsibility across teams. Data scientists may assume that engineering teams own the system after deployment, while engineering teams expect data scientists to monitor model behavior. Program managers must actively prevent these gaps by clearly defining ownership for model performance, data pipelines, monitoring alerts, and change approvals. Without this clarity, issues linger unresolved and confidence erodes.

Inconsistent environments also create risk. Models that perform well in development may behave differently in production due to differences in data sources, infrastructure, or configuration. MLOps practices help standardize environments and reduce surprises, but only if they are enforced consistently across teams.

Finally, organizations often underestimate the effort required to maintain AI systems over time. Data changes, business priorities shift, and models require retraining. By anticipating these realities and embedding MLOps discipline early, program managers can avoid reactive firefighting and guide AI initiatives toward stable, long-term success.

About the Author

Imran Afzal

Imran Afzal, CEO of UTCLI Solutions and a best-selling IT instructor, has trained over a million students worldwide in IT, systems administration, and career development. An educator, mentor, and entrepreneur, he brings over 25 years of experience in systems engineering, leadership, and training across Fortune 500 companies in finance, fashion, and tech media.

His IT journey began in 2001 at Time Warner, NYC, and has since included leading major projects like data center migrations, VMware deployments, monitoring tool implementations, and Amazon cloud migrations. Imran holds a degree in Computer Information Systems from Baruch College (CUNY) and an MBA from NYIT.

Certified in Linux System Administration, VMware, UNIX, and Windows Server, Imran has been training students since 2010 through top-rated online courses and on-site programs. His mentorship has helped thousands secure IT jobs.

Beyond IT, Imran is dedicated to education and community service, founding a nonprofit school for children (pre-K to 10th grade).