Why AI Spend Balloons Without Delivering Enterprise Value

Enterprise AI budgets rarely fail because leaders refuse to invest. They fail because money is allocated using mental models that no longer fit the work being done.

AI initiatives often begin with optimism: small teams, modest pilots, and limited risk. The expectation is that value will prove itself quickly and scale will follow naturally. What actually happens is slower, messier, and far more expensive. Costs accumulate quietly across data work, integration, operations, and risk management. By the time executives notice, budgets are already strained and trust is eroding.

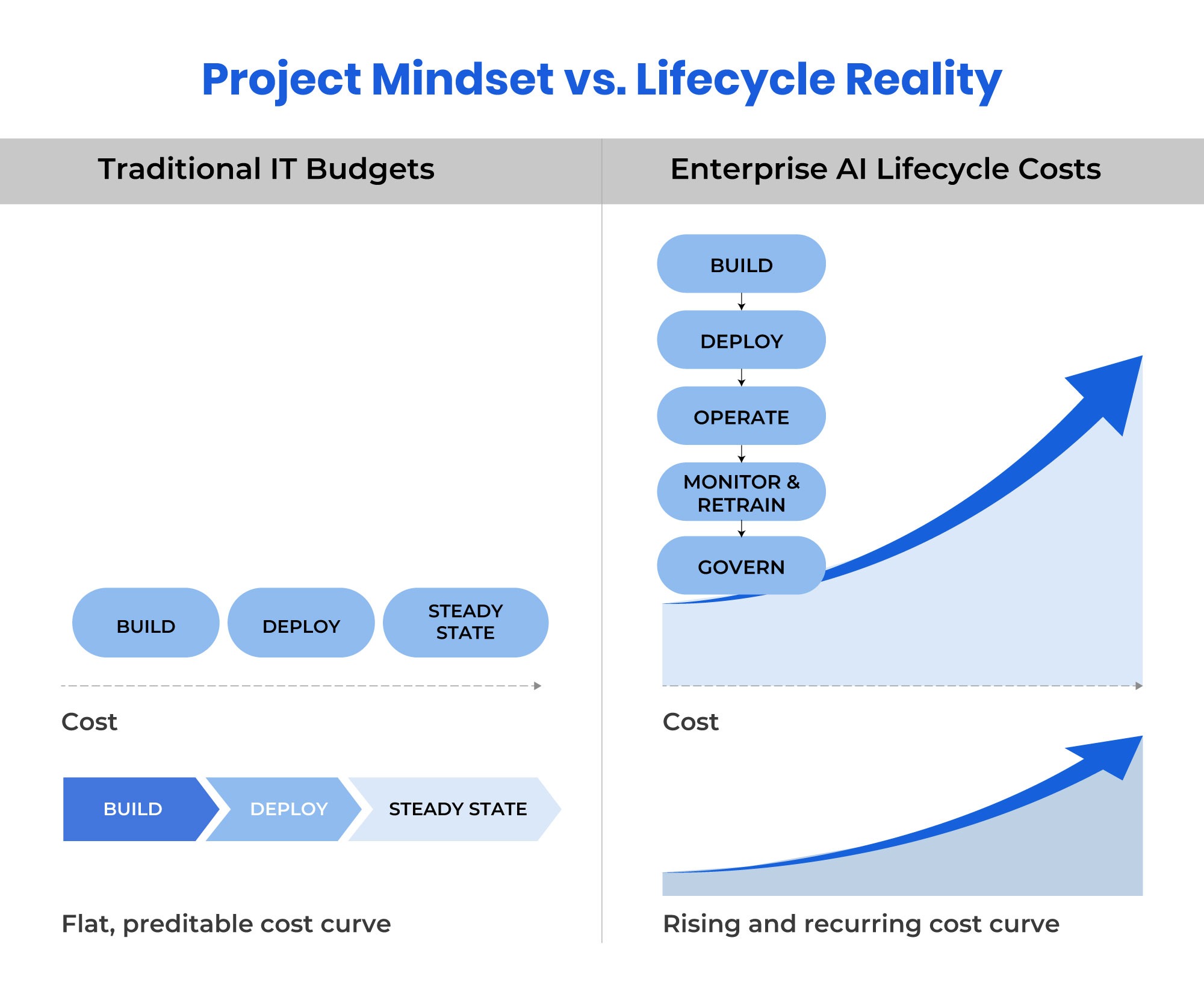

Many software projects reach a stable state after release. AI systems often do not move cleanly from build to deploy to steady state. They operate across a lifecycle. Models can drift. Data can degrade. Regulations can change. Skills shortages can persist. Budgeting that treats AI like a contained project almost guarantees overruns.

The result is a familiar executive pattern. AI spend increases year over year. Tangible enterprise impact remains hard to explain.

Why Traditional Budgeting Models Break Down for AI

Most organizations still fund AI as if it were software delivery. A business case is approved. A project budget is allocated. A team is formed. Success is defined narrowly. What is missing is acknowledgment that AI is an operating capability, not a feature.

Three structural mismatches often show up. A typical enterprise scenario shows how they arise. A business unit requests funding for an AI initiative to improve customer retention. Finance approves a capital budget for model development and initial deployment. The project is scoped, staffed, and launched. Months later, the model is live and performing well.

Then reality sets in. Ongoing model monitoring requires dedicated resources. Data pipelines need continuous validation. Regulatory requirements demand periodic reviews (e.g., quarterly). Performance tuning becomes a permanent operational need. These costs were never part of the original business case. They fall into an undefined space between IT operations and business ownership.

The question of who pays for what, and from which budget line, remains unresolved. Work continues because stopping would waste prior investment. Costs are absorbed informally. Accountability fragments.

The scenario reflects all three mismatches. First, project funding is used where program funding is required. Second, capital and operating expenses are blended without clear ownership. Third, tools are funded without funding the work required to keep them effective over time.

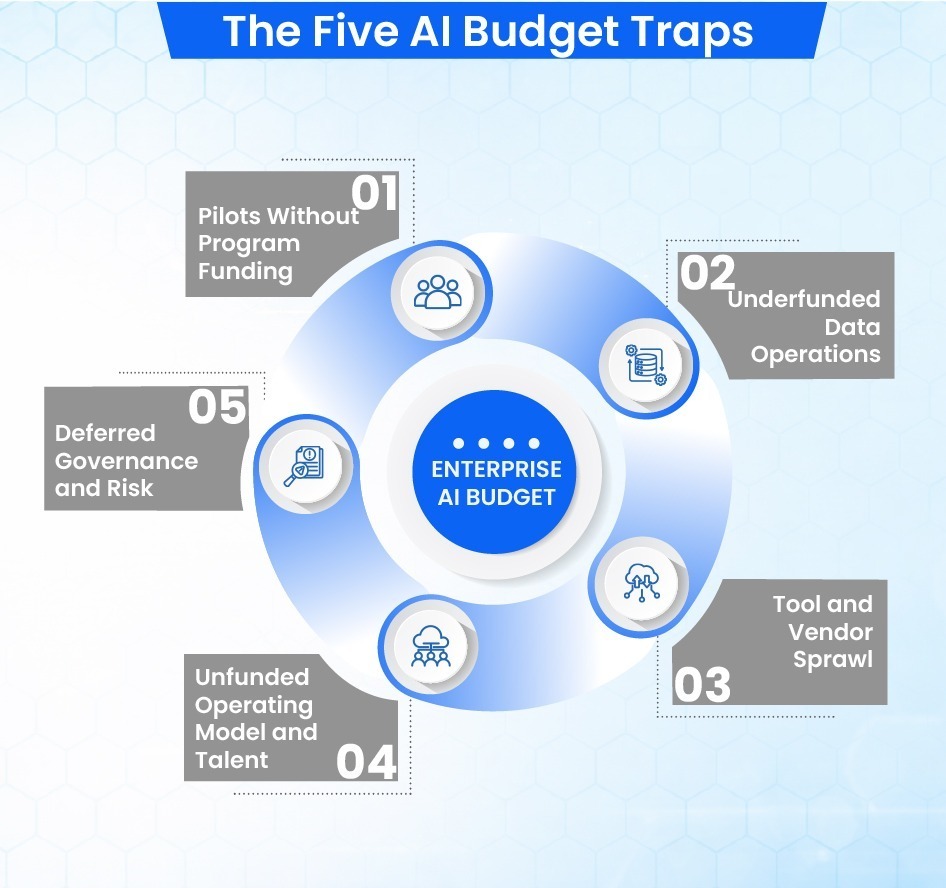

These mismatches surface through five recurring budget traps.

Trap 1. Funding Pilots Without Funding the Program

The most common AI budget failure starts with a pilot that works.

A team proves that a model can reduce call handling time. Another shows predictive maintenance accuracy. A third automates document classification. Each pilot is funded cheaply and celebrated as a win.

What is missing is a funded path to scale.

In one large enterprise, over 30 AI pilots were approved across different business units in a single year. Fewer than five ever reached production. The rest stalled when teams realized that integration, security review, data pipelines, and operational support were unfunded. The pilots did not fail technically. They failed financially.

The budget trap is approving pilots without explicitly funding what happens next. Scale costs more than experimentation. It always has.

What Experienced Teams Do Instead

Mature organizations separate exploration from commitment. They fund pilots with explicit exit criteria and pre-approved scale assumptions. If those assumptions cannot be funded, the pilot is treated as learning, not progress.
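The distinction between learning and progress can be written down as a simple decision rule. The sketch below is illustrative only: the `PilotProposal` fields, the `classify_pilot` function, and the figures are hypothetical and not drawn from any specific organization. It assumes the scale budget ceiling was agreed before the pilot started.

```python
from dataclasses import dataclass


@dataclass
class PilotProposal:
    """Hypothetical record of what a pilot approval could capture."""
    name: str
    exit_criteria_met: bool        # did the pilot hit its agreed success measures?
    estimated_scale_cost: float    # integration, security review, pipelines, support
    approved_scale_budget: float   # ceiling agreed before the pilot started


def classify_pilot(pilot: PilotProposal) -> str:
    """Return 'stop', 'scale', or 'learning' for a finished pilot."""
    if not pilot.exit_criteria_met:
        return "stop"
    if pilot.estimated_scale_cost <= pilot.approved_scale_budget:
        return "scale"
    # The pilot worked, but the path to production is not funded.
    return "learning"


# Illustrative figures only.
call_handling = PilotProposal("call-handling", True, 900_000, 400_000)
print(classify_pilot(call_handling))  # learning
```

The point of the rule is that a technically successful pilot with an unfunded scale path is recorded as learning at approval time, instead of being discovered as a stall later.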

Trap 2. Underestimating Data Readiness and Data Operations Costs

Data work is one of the most consistently underestimated costs in enterprise AI.

Executives often assume data preparation is a one-time effort. Clean the data. Train the model. Move on. In practice, data operations are a recurring operational expense.

One organization deployed a forecasting model that performed well in testing but degraded within months. The root cause was not the model. Upstream data sources changed format. Manual fixes crept in. Labels became inconsistent. Model accuracy declined, triggering emergency rework.

None of this was budgeted.

The hidden cost is not the initial data cleanup. It is ongoing data validation, labeling, lineage tracking, access controls, and governance. These costs compound quietly and are often absorbed informally until they become visible as delivery delays.

What Experienced Teams Do Instead

They treat data as a managed product. Budgets explicitly include recurring data operations. Model performance expectations are tied directly to sustained data investment.

Trap 3. Tool Proliferation and Vendor Sprawl

AI tool sprawl rarely starts as a problem. It starts as speed.

Teams buy tools to move fast. This includes cloud platforms, model APIs, experimentation environments, governance add-ons, and monitoring tools. Each purchase makes sense locally. Collectively, they create a fragmented portfolio that is expensive to operate and harder to unwind.

In one enterprise review I saw, over 20 AI-related platforms were in use across six business units. Licensing costs overlapped. Capabilities were duplicated. No single team owned usage optimization. Some tools were idle but still fully licensed.

The budget trap is allowing AI tooling to scale faster than organizational absorption capacity.

What Experienced Teams Do Instead

They manage AI tooling as a portfolio, not a shopping list. Platform ownership is centralized. Usage is tracked. Contracts are aligned to actual value delivery rather than experimentation convenience.
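A portfolio view can start as something very small. The following sketch assumes a hypothetical inventory of licenses with seat counts; the `ToolLicense` fields, the `review_portfolio` function, the 25% utilization threshold, and the tool names are all assumptions made for illustration. It flags tools that are mostly idle and capabilities covered by more than one tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ToolLicense:
    """Hypothetical inventory entry for one licensed AI tool."""
    name: str
    capability: str       # e.g. "monitoring", "labeling"
    annual_cost: float
    licensed_seats: int
    active_seats: int


def review_portfolio(tools, min_utilization=0.25):
    """Return (idle tool names, capabilities served by more than one tool)."""
    idle = [
        t.name
        for t in tools
        if t.licensed_seats > 0
        and t.active_seats / t.licensed_seats < min_utilization
    ]
    by_capability = defaultdict(list)
    for t in tools:
        by_capability[t.capability].append(t.name)
    overlaps = {cap: names for cap, names in by_capability.items() if len(names) > 1}
    return idle, overlaps


# Illustrative inventory only.
tools = [
    ToolLicense("MonitorA", "monitoring", 120_000, 100, 10),
    ToolLicense("MonitorB", "monitoring", 80_000, 50, 40),
    ToolLicense("LabelTool", "labeling", 30_000, 20, 0),
]
idle, overlaps = review_portfolio(tools)
print(idle)      # ['MonitorA', 'LabelTool']
print(overlaps)  # {'monitoring': ['MonitorA', 'MonitorB']}
```

Seat utilization is only one possible signal. The design choice that matters is that a single owner runs the review across business units, so overlap becomes visible at all.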

Trap 4. Ignoring Operating Model and Talent Costs

Many AI initiatives are staffed as side projects. A few data scientists. Some borrowed engineers. Occasional legal or risk review. This works until the system needs to run reliably.

In one case, a production AI system depended on a single senior engineer who understood its data pipeline. When that person left, model performance issues went unresolved for weeks. There was no funded role for ongoing model operations or risk oversight.

The trap is assuming AI can be built without being operated.

What Experienced Teams Do Instead

They define an AI operating model early. Roles for build, run, and govern are explicitly funded. External vendors are used deliberately, not as a substitute for ownership.

Trap 5. Treating Governance and Risk as Overhead

Governance is often funded last, if at all.

Early AI work proceeds informally. Controls are light. Documentation is minimal. When regulatory or audit scrutiny arrives, teams scramble. Models must be revalidated. Data sources must be justified. Decisions must be explained retroactively.

One enterprise paused multiple AI deployments after a compliance review uncovered insufficient model documentation. Months of rework followed. Budgets doubled. Timelines slipped.

The cost was not governance itself. The cost was deferring it.

What Experienced Teams Do Instead

They embed governance from day one. Budget is allocated for risk management, documentation, monitoring, and review as part of delivery. Governance is treated as value protection, not friction.

The Compounding Effect of Budget Traps

These traps rarely appear alone.

Unfunded pilots lead to tool sprawl. Tool sprawl increases operating complexity. Weak operating models amplify data issues. Deferred governance magnifies rework costs.

Consider the trajectory of one enterprise AI initiative. A successful customer service pilot was approved for scale without dedicated data operations funding. The team purchased a monitoring platform to compensate, adding to tool sprawl. As model performance declined due to data quality issues, the organization brought in external consultants. Consultants identified missing governance controls. Regulatory review followed. The initiative paused for four months of remediation.

Total budget impact exceeded three times the original scale estimate. Timeline slipped by eight months. Executive confidence eroded. The underlying model was sound. The financial and operational planning was not.

By the time leaders intervene, the narrative becomes one of cost containment rather than value creation. AI becomes perceived as expensive and unpredictable, even when the underlying technology is sound.

Most overruns are not surprises. They are the result of predictable patterns that were visible early but unaddressed.

A Practical Budgeting Lens for Enterprise AI

Organizations that avoid these traps tend to do three things. First, they budget across the lifecycle, not just delivery. This means forecasting costs for model monitoring, retraining, data operations, and governance as part of the initial business case. Portfolio-level value tracking replaces single-project ROI calculations. Leaders evaluate AI spend against sustained business outcomes, not just deployment milestones.

Second, they fund accountability, not activity. Roles are defined and resourced for ongoing ownership. Someone is explicitly responsible for model performance, data quality, risk management, and operational continuity. The budget follows the accountability structure.

Third, they align financial controls with execution maturity. Early-stage initiatives operate under different funding rules than production systems. Funding gates are tied to readiness signals like defined operating models, proven data pipelines, and documented governance. Finance, technology, and risk leaders share ownership of these gates rather than operating in separate approval tracks.

This approach requires closer collaboration across functions. It surfaces cost realities earlier. It makes trade-offs explicit rather than implicit.
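Lifecycle budgeting comes down to simple arithmetic that the initial business case often omits. The sketch below is a minimal illustration: the `LifecycleBudget` class, its cost categories (taken from the four recurring items named above), the flat annual costs, and every figure are assumptions, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class LifecycleBudget:
    """Hypothetical lifecycle view of one AI initiative (flat annual costs assumed)."""
    build_cost: float          # one-time development and deployment
    annual_monitoring: float
    annual_retraining: float
    annual_data_ops: float
    annual_governance: float

    def annual_run_cost(self) -> float:
        return (
            self.annual_monitoring
            + self.annual_retraining
            + self.annual_data_ops
            + self.annual_governance
        )

    def total_cost(self, years: int) -> float:
        """Build cost plus recurring cost over the planning horizon."""
        return self.build_cost + years * self.annual_run_cost()

    def run_share(self, years: int) -> float:
        """Fraction of total spend that is recurring rather than build."""
        return years * self.annual_run_cost() / self.total_cost(years)


# Illustrative figures only.
retention_model = LifecycleBudget(
    build_cost=500_000,
    annual_monitoring=60_000,
    annual_retraining=90_000,
    annual_data_ops=120_000,
    annual_governance=50_000,
)
print(retention_model.total_cost(3))           # 1460000
print(round(retention_model.run_share(3), 2))  # 0.66
```

With these made-up numbers, a business case that funds only the build would cover roughly a third of the three-year spend. Real figures will differ, but the shape of the calculation is what surfaces the gap early.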

The question is not whether AI costs money. It always will. The question is whether spend is deliberate, transparent, and aligned to outcomes.

Implications for Program Managers and Executives

Leaders approving AI budgets should ask different questions.

What happens if this pilot succeeds? Who owns scale? What recurring costs are implied? What data dependencies exist? Who is accountable for ongoing performance and risk?

Organizations that can answer these questions upfront are less likely to experience runaway AI spend. Those that cannot are more likely to.

Good answers are specific. They name owners. They quantify recurring costs. They map dependencies. They define funding sources for each phase of the lifecycle. When a team cannot provide these answers, the right decision is often to defer approval until clarity exists.
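These questions can be turned into a checklist that a funding request must clear before approval. The sketch below is one hypothetical way to do that: the `BudgetRequest` fields, the `open_questions` function, and the build, run, and govern phase names are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Assumed lifecycle phases; an organization would substitute its own.
LIFECYCLE_PHASES = ("build", "run", "govern")


@dataclass
class BudgetRequest:
    """Hypothetical AI funding request as presented for approval."""
    scale_owner: str = ""
    performance_owner: str = ""
    annual_recurring_cost: Optional[float] = None   # None means not quantified
    data_dependencies: Optional[List[str]] = None   # None means not mapped
    funding_source_by_phase: Dict[str, str] = field(default_factory=dict)


def open_questions(req: BudgetRequest) -> List[str]:
    """List the questions the request leaves unanswered."""
    gaps = []
    if not req.scale_owner:
        gaps.append("no owner named for scale")
    if not req.performance_owner:
        gaps.append("no owner named for ongoing performance and risk")
    if req.annual_recurring_cost is None:
        gaps.append("recurring costs not quantified")
    if req.data_dependencies is None:
        gaps.append("data dependencies not mapped")
    for phase in LIFECYCLE_PHASES:
        if not req.funding_source_by_phase.get(phase):
            gaps.append(f"no funding source for phase: {phase}")
    return gaps


request = BudgetRequest(
    scale_owner="Head of Customer Experience",
    annual_recurring_cost=320_000,
    data_dependencies=["crm_events"],
    funding_source_by_phase={"build": "capex", "run": "opex"},
)
for gap in open_questions(request):
    print(gap)
```

An empty list is the signal to proceed. Any remaining entry is a concrete reason to defer approval.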

Disciplined budgeting is not about spending less. It is about avoiding predictable waste and protecting credibility.

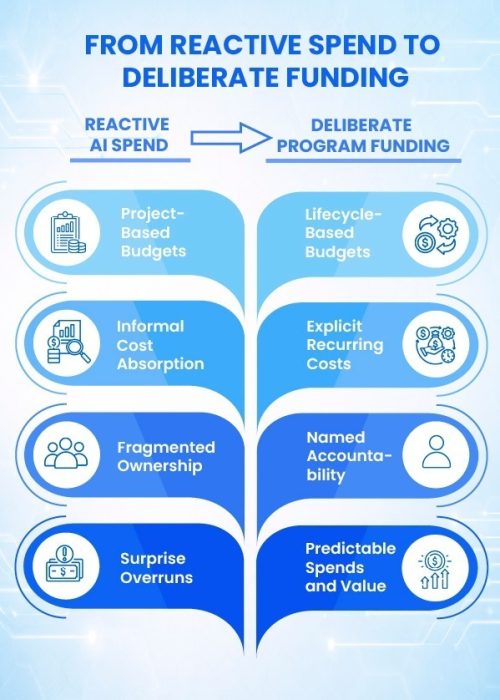

Structured approaches to AI program management, such as those reflected in programs like EC-Council’s Certified AI Program Manager (CAIPM), are designed to help address these budget traps systematically. They emphasize lifecycle thinking, accountability models, and integrating financial planning with risk management and governance from the start. Organizations adopting these practices shift from reactive budget management to deliberate program funding.

The difference is not theoretical. It shows up in delivery confidence, cost predictability, and sustained value creation.

Looking Forward

Enterprise AI budgets reveal organizational readiness more clearly than any maturity assessment. Teams that fund AI as a program build durable capability. Teams that fund it as a collection of projects accumulate debt.

The difference shows up not just in financials, but in trust, delivery confidence, and long-term value creation.
