Bias, Model Drift, Hallucination: Mapping AI Risks to Governance Controls

As artificial intelligence (AI) becomes more deeply embedded in business operations, managing AI risks has become just as important as achieving performance or innovation. Organizations are no longer experimenting with AI in isolation. AI systems now influence hiring decisions, customer interactions, financial forecasts, security monitoring, and operational workflows. When AI fails, the impact is no longer theoretical. It affects people, revenue, trust, and regulatory exposure.

Among the many risks associated with AI, three come up repeatedly and are often misunderstood: bias, model drift, and hallucination. Each manifests differently, creates different forms of harm, and requires distinct governance controls. Treating them as a single category of “AI risk” often leads to weak or ineffective oversight.

Effective governance starts by understanding how each risk arises, then mapping that risk to specific controls, accountability structures, and monitoring practices. When risks are clearly mapped to governance actions, AI programs become more predictable, auditable, and aligned with organizational standards.

Understanding Why AI Risks Require Structured Governance

Unlike traditional software, AI systems do not behave in entirely predictable ways. Conventional systems typically produce the same output for the same input unless their code is explicitly changed. AI systems, by contrast, rely on patterns learned from data and on probabilistic reasoning, so their reliability depends on how closely real-world inputs continue to resemble the data they learned from. As data and real-world conditions shift, their outputs and accuracy can change even when no code has changed. This dynamic nature makes AI powerful, but it also introduces new risks that must be managed deliberately.

AI risks often emerge gradually rather than as a single failure event. A model may perform well during testing but degrade quietly over time. A system may appear accurate overall but produce unfair or incorrect results for specific groups. Generative AI systems may produce responses that sound confident yet contain factual inaccuracies. Without structured oversight, these issues can persist unnoticed until they cause measurable harm.

Structured governance provides the framework needed to identify, monitor, and respond to these risks early. Governance is not intended to restrict innovation or introduce excessive administrative complexity. Its purpose is to create guardrails that allow AI systems to scale responsibly and predictably. Clear governance ensures that risks are visible, ownership is defined, and responses are timely.

Program managers play a central role in this process. Positioned between business objectives, technical teams, and operational accountability, they are responsible for ensuring that AI risks are understood and addressed in practical ways. By establishing structured governance early, organizations create a foundation that supports ethical use, operational stability, and long-term trust in AI systems.

Bias: Identifying and Governing Systemic AI Risks

Bias is one of the most frequently discussed risks in AI, yet it is also one of the most misunderstood. In most cases, bias does not arise from malicious intent or poor engineering. It most often originates in the data used to train AI systems. Historical data often reflects existing imbalances, incomplete representation, or embedded assumptions. When models learn from this data, they can reproduce or amplify those patterns, leading to unfair or inaccurate outcomes.

Bias becomes especially concerning when AI systems are used in decision-making processes that affect people directly. Hiring recommendations, credit evaluations, customer prioritization, and risk scoring are all areas where biased outcomes can have legal, ethical, and reputational consequences. Even subtle disparities in error rates across different user groups can undermine trust and expose organizations to regulatory scrutiny.

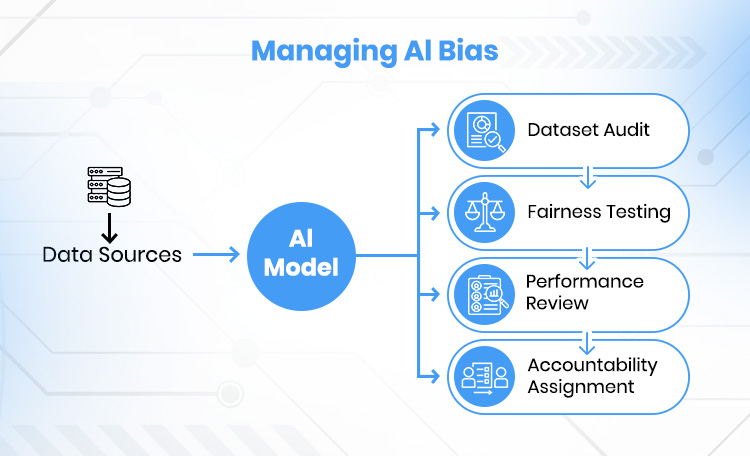

Governance controls for bias must begin with strong data oversight. Organizations should establish structured data reviews to assess where training data comes from, how it was collected, and whether it adequately represents the populations the AI system will affect. These reviews should not be limited to initial development. As data sources evolve, governance teams must reassess data quality and representation regularly.
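
As a rough illustration of what a representation review might check, the Python sketch below compares each group's share of the training data with its share of a reference population and flags gaps larger than a tolerance. The function name, group labels, reference shares, and the 0.05 tolerance are all hypothetical; choosing the reference population and an acceptable gap is a governance decision, not something the code can settle.

```python
def representation_gaps(train_counts, population_shares, tolerance=0.05):
    """Flag groups whose share of the training data differs from their
    share of the reference population by more than `tolerance`.

    Returns {group: observed_share - expected_share} for flagged groups.
    """
    total = sum(train_counts.values())
    if total == 0:
        raise ValueError("training data is empty")
    gaps = {}
    for group, expected in population_shares.items():
        # Groups absent from the training data count as a share of zero.
        observed = train_counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)
    return gaps


# Hypothetical example: group_a is over-represented, group_c under-represented.
train_counts = {"group_a": 700, "group_b": 270, "group_c": 30}
population_shares = {"group_a": 0.55, "group_b": 0.30, "group_c": 0.15}
print(representation_gaps(train_counts, population_shares))
```

Rerunning the same check whenever a data source changes is one way to make the review recurring rather than a one-time step.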

Validation practices are another critical control. Models should be tested against diverse validation datasets that reflect real-world usage, with results broken down by relevant user groups rather than summarized only as average performance metrics. An aggregate score can look strong while hiding much higher error rates for a smaller group. Bias-related performance indicators should be documented and reviewed as part of model approval and deployment decisions.
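
To make bias-related performance indicators concrete, the Python sketch below computes the error rate for each group in a labeled validation set and the largest gap between groups. It assumes ground-truth labels and a group attribute are available for each validation record; the `max_gap` threshold of 0.1 is a hypothetical approval criterion, and real programs may also track measures such as false positive and false negative rates separately.

```python
from collections import defaultdict


def error_rates_by_group(y_true, y_pred, groups):
    """Return {group: fraction of that group's predictions that were wrong}."""
    if not (len(y_true) == len(y_pred) == len(groups)):
        raise ValueError("inputs must have the same length")
    errors = defaultdict(int)
    counts = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        counts[group] += 1
        errors[group] += int(truth != pred)
    return {g: errors[g] / counts[g] for g in counts}


def bias_check(y_true, y_pred, groups, max_gap=0.1):
    """Compare the best- and worst-served groups against a hypothetical
    approval threshold. Returns (rates, gap, passed)."""
    rates = error_rates_by_group(y_true, y_pred, groups)
    gap = max(rates.values()) - min(rates.values())
    return rates, gap, gap <= max_gap


# Hypothetical validation results for two groups.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 0, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
rates, gap, passed = bias_check(y_true, y_pred, groups)
print(rates, gap, passed)
```

In this toy example the overall error rate is 3 out of 8, yet group b's error rate is twice group a's. Recording the per-group rates and the gap alongside the approval decision gives reviewers and auditors something specific to examine.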

Clear accountability is essential. Bias governance fails when responsibility is diffuse or undefined. Program managers help ensure that ownership for ethical outcomes is assigned across data teams, business owners, and risk or compliance functions. Regular audits and review checkpoints reinforce accountability and help ensure that models continue to operate as intended as their use expands.

Model Drift: Managing Performance Degradation over Time

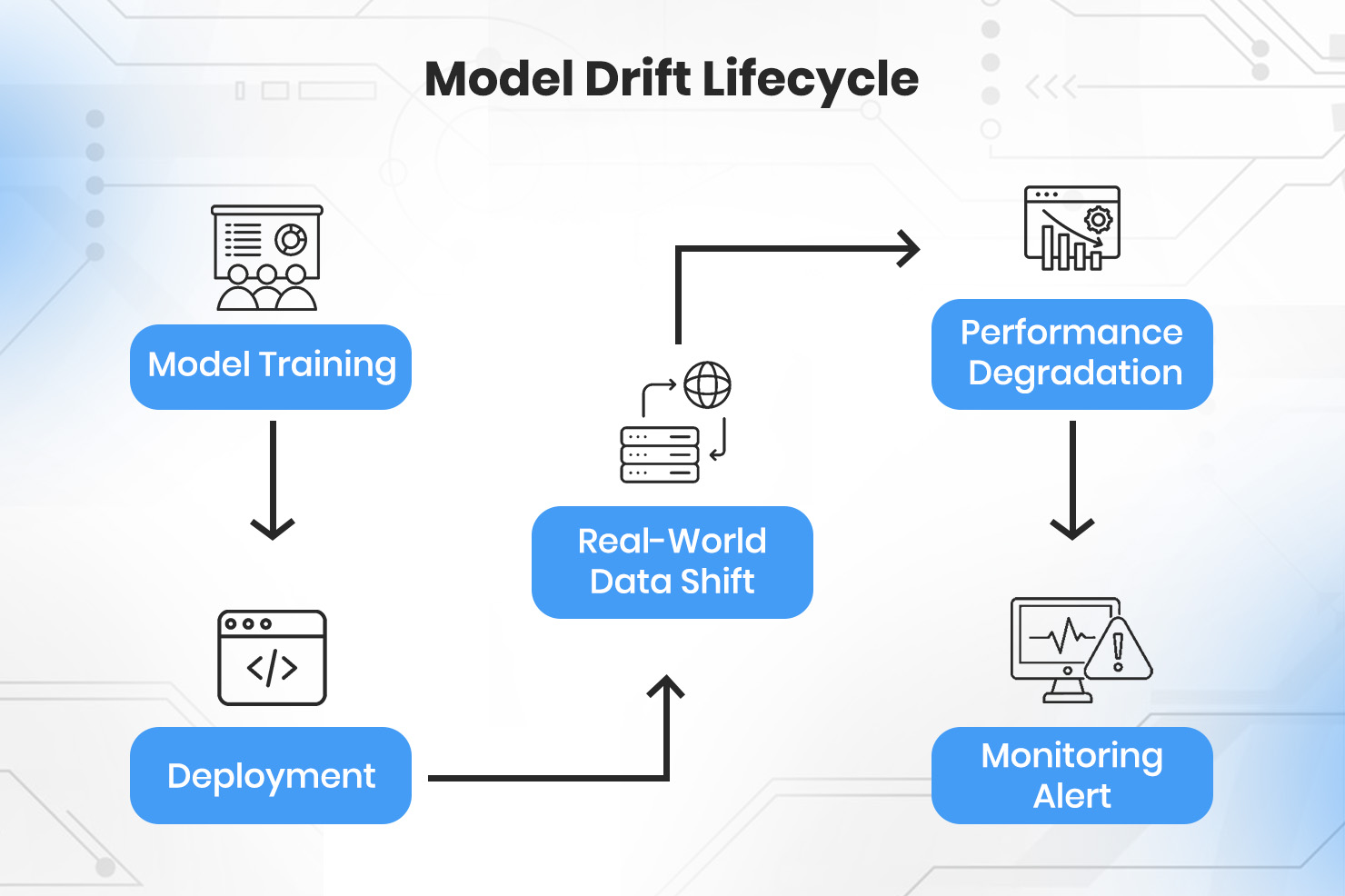

Model drift is one of the most common and least visible risks of AI systems. It describes the performance degradation that occurs when the data a model encounters in production diverges from the data it was trained on. These changes can happen gradually or suddenly. Customer behavior may evolve, market conditions may shift, or upstream systems may introduce new data formats or distributions. When this happens, model performance can decline even though the system appears to be functioning normally.

What makes drift especially dangerous is that it rarely causes immediate or obvious failures. Unlike traditional software bugs, drift does not usually trigger errors or alerts by default. Instead, predictions become less accurate over time, decisions grow less reliable, and business outcomes suffer quietly. Without deliberate governance controls, organizations may not realize a model has degraded until meaningful damage has already occurred.

Effective governance for drift begins with continuous monitoring. Organizations should define clear performance metrics and thresholds that indicate when a model is operating outside acceptable limits. These metrics should include both technical indicators, such as accuracy, input data distributions, or prediction confidence distributions, and business-level outcomes, such as conversion rates, error costs, or service quality. Because ground-truth labels often arrive late, shifts in input distributions are frequently the earliest available warning. Monitoring should be automated where possible and reviewed regularly.
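
One widely used way to automate part of this monitoring is to compare how a model's inputs are distributed in production against a baseline. The Population Stability Index (PSI) does this by binning a feature's values in both samples and summing (a − e) · ln(a / e) over the bins, where e and a are the baseline and current bin shares. The Python sketch below is an illustrative implementation, not any specific monitoring product's method. The bin edges are assumed inputs, and the 0.1 and 0.25 thresholds are a common rule of thumb rather than a standard; each program would set its own.

```python
import bisect
import math


def bin_shares(values, edges, eps=1e-6):
    """Fraction of `values` in each bin defined by sorted interior `edges`.
    Empty bins get a small `eps` so the log term below stays finite."""
    if not values:
        raise ValueError("no values to bin")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def psi(baseline, current, edges):
    """Population Stability Index: sum over bins of (a - e) * ln(a / e),
    where e and a are the baseline and current bin shares."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_status(score, warn=0.1, alert=0.25):
    """Map a PSI score to an action level (hypothetical thresholds)."""
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warn"
    return "ok"


# Hypothetical feature: baseline spread evenly, production concentrated low.
edges = [10, 20, 30]
baseline = [5, 15, 25, 35] * 25
current = [5] * 50 + [15] * 50
score = psi(baseline, current, edges)
print(round(score, 2), drift_status(score))
```

A score like this covers only input distributions; business-level outcomes still need their own metrics and thresholds.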

Scheduled model reviews provide an additional layer of control. Rather than waiting for performance issues to surface, governance frameworks should require periodic evaluations of model behavior, data inputs, and underlying assumptions. These reviews create structured opportunities to retrain, recalibrate, or retire models before drift becomes harmful.

Program managers play a critical role in drift governance. They ensure that monitoring responsibilities are clearly assigned and that alerts result in action rather than being ignored. Drift governance fails when no one is accountable for acting on those signals. Clear documentation, defined escalation paths, and ownership of remediation decisions help keep AI systems reliable as conditions change.
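
Defined escalation paths can be made explicit in the same tooling that raises alerts. The Python sketch below assumes a hypothetical three-level chain and a 24-hour acknowledgement window: an unacknowledged drift alert moves one level up the chain for each window that passes. The role names, the `DriftAlert` record, and the timing are illustrative assumptions, not a recommended policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical escalation chain, lowest level first.
ESCALATION_PATH = ["model_owner", "program_manager", "risk_committee"]


@dataclass
class DriftAlert:
    model: str
    raised_at: datetime
    acknowledged_at: Optional[datetime] = None


def responsible_party(alert, now, window=timedelta(hours=24)):
    """Return who should be acting on the alert at time `now`.

    Each full `window` without acknowledgement moves the alert one level
    up ESCALATION_PATH, stopping at the top. Returns None once someone
    has acknowledged the alert (remediation is then tracked elsewhere).
    """
    if alert.acknowledged_at is not None:
        return None
    elapsed = max(now - alert.raised_at, timedelta(0))
    level = min(int(elapsed / window), len(ESCALATION_PATH) - 1)
    return ESCALATION_PATH[level]


alert = DriftAlert("churn_model", raised_at=datetime(2024, 1, 1, 9, 0))
for hours in (2, 30, 100):
    print(hours, responsible_party(alert, alert.raised_at + timedelta(hours=hours)))
```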

Hallucinations: Governing Generative AI Behavior

Hallucinations are most closely associated with generative AI systems. A hallucination occurs when an AI system produces output that appears confident, coherent, and authoritative but is factually incorrect, misleading, or entirely fabricated. Unlike bias or model drift, hallucination is not always tied to data quality or changing patterns. Instead, this risk emerges from how generative models construct responses based on probability rather than verified truth.

This risk becomes particularly serious in domains where accuracy and reliability are critical. In areas such as healthcare, finance, legal analysis, cybersecurity, or internal decision support, hallucinated outputs can lead to incorrect conclusions, poor decisions, or loss of trust. The confident tone often associated with generative systems can make these errors harder to detect, especially for non-expert users.

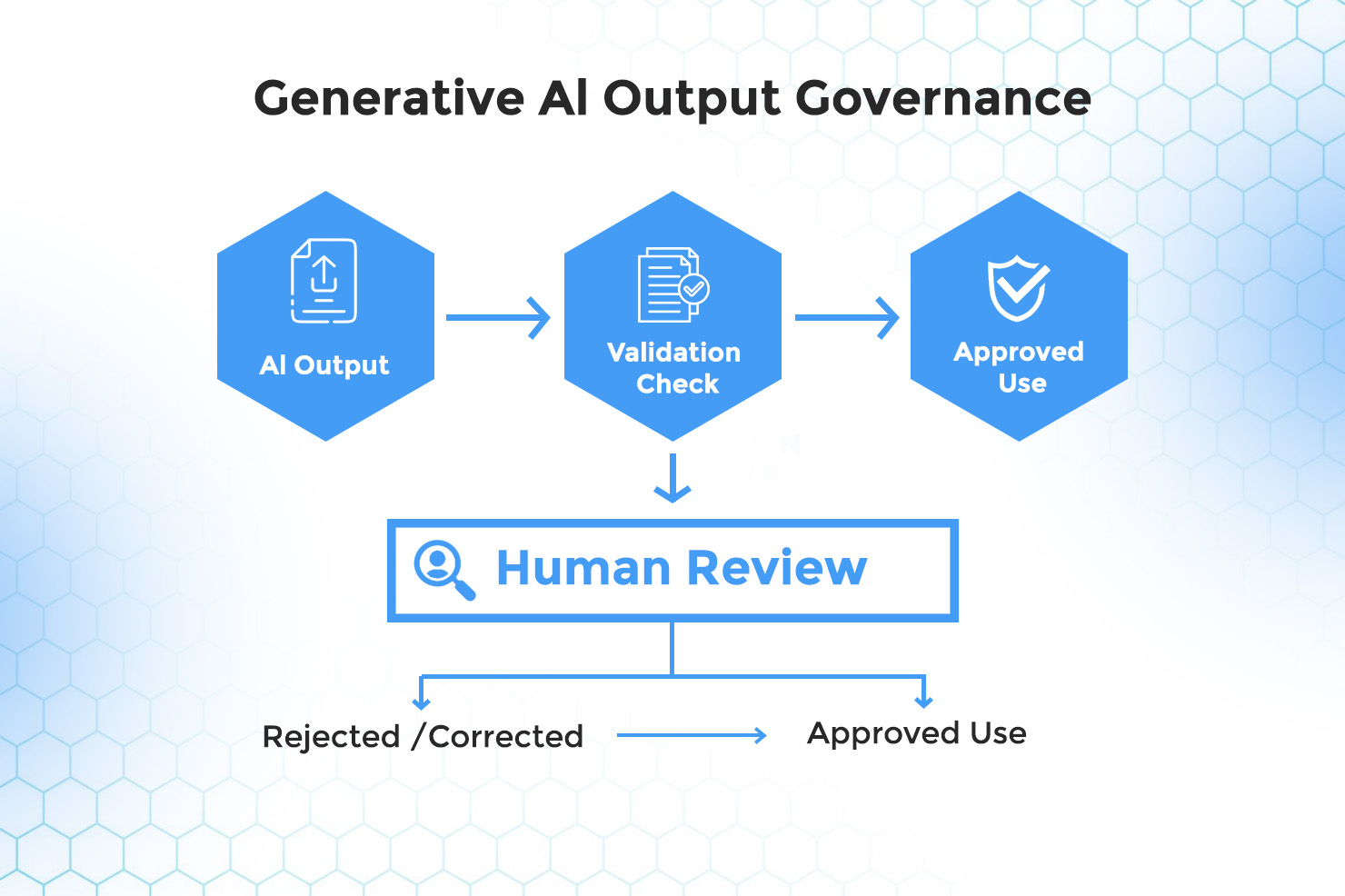

Governance controls for hallucinations focus on boundaries, validation, and oversight. One of the most effective controls is defining clear usage boundaries. Organizations should specify where generative AI can be used safely and where it must be restricted or supplemented with human review. Not every task is appropriate for fully automated generation.

Human review processes play a central role in mitigating hallucination risks. Outputs that influence decisions, customer communication, or external reporting should be reviewed by qualified individuals, particularly during early deployment phases. Over time, review requirements can be adjusted based on observed performance and risk tolerance.
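
Usage boundaries and review requirements are easier to apply consistently when they are written down as an explicit policy rather than left to individual judgment. The Python sketch below encodes a hypothetical policy table that maps each use case to automated use, mandatory human review, or prohibition. It denies unlisted use cases by default and routes everything through review during an early-deployment phase. The use-case names and the default-deny choice are assumptions for illustration.

```python
from enum import Enum


class Handling(Enum):
    AUTOMATED = "automated"
    HUMAN_REVIEW = "human_review"
    PROHIBITED = "prohibited"


# Hypothetical policy table: use case -> required handling.
USAGE_POLICY = {
    "internal_draft_summary": Handling.AUTOMATED,
    "customer_email": Handling.HUMAN_REVIEW,
    "external_report": Handling.HUMAN_REVIEW,
    "medical_diagnosis": Handling.PROHIBITED,
}


def required_handling(use_case, early_deployment=True):
    """Decide how a generated output for `use_case` must be handled."""
    # Use cases nobody has assessed are denied by default.
    handling = USAGE_POLICY.get(use_case, Handling.PROHIBITED)
    # During early deployment, even low-risk outputs get a human check.
    if early_deployment and handling is Handling.AUTOMATED:
        return Handling.HUMAN_REVIEW
    return handling


print(required_handling("customer_email"))
print(required_handling("internal_draft_summary", early_deployment=False))
print(required_handling("contract_drafting"))
```

Turning off `early_deployment` for a use case once observed performance justifies it mirrors the gradual adjustment of review requirements described above.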

Output validation mechanisms provide additional protection. These may include requiring source references, implementing confidence indicators, or designing prompts that encourage the system to acknowledge uncertainty rather than inventing answers. Governance teams should also ensure that users are educated about the limitations of generative AI and understand that confident language does not guarantee correctness.
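
A requirement for source references can be checked mechanically, at least in part. The Python sketch below assumes a hypothetical convention in which the system is given documents labeled `S1`, `S2`, and so on, and must cite them inline as `[S1]`. It flags answers that cite nothing or cite a label that was never provided. It cannot confirm that a cited source actually supports the claim, so it complements human review rather than replacing it.

```python
import re

# Hypothetical citation convention: inline markers such as [S1] or [S12].
CITATION_PATTERN = re.compile(r"\[(S\d+)\]")


def citation_problems(answer, provided_source_ids):
    """Return a list of problems with the answer's source references.
    An empty list means every citation points at a provided source."""
    cited = set(CITATION_PATTERN.findall(answer))
    problems = []
    if not cited:
        problems.append("no source references")
    unknown = sorted(cited - set(provided_source_ids))
    if unknown:
        # A label the system was never given is a likely fabrication.
        problems.append("unknown sources: " + ", ".join(unknown))
    return problems


sources = {"S1", "S2"}
print(citation_problems("Travel must be pre-approved [S1].", sources))
print(citation_problems("Travel must be pre-approved.", sources))
print(citation_problems("Travel must be pre-approved [S7].", sources))
```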

Program managers are responsible for ensuring these controls are consistently applied. By embedding hallucination governance into workflows and expectations, organizations reduce misuse and preserve trust in AI-assisted systems.

Mapping AI Risks to Governance for Sustainable AI Programs

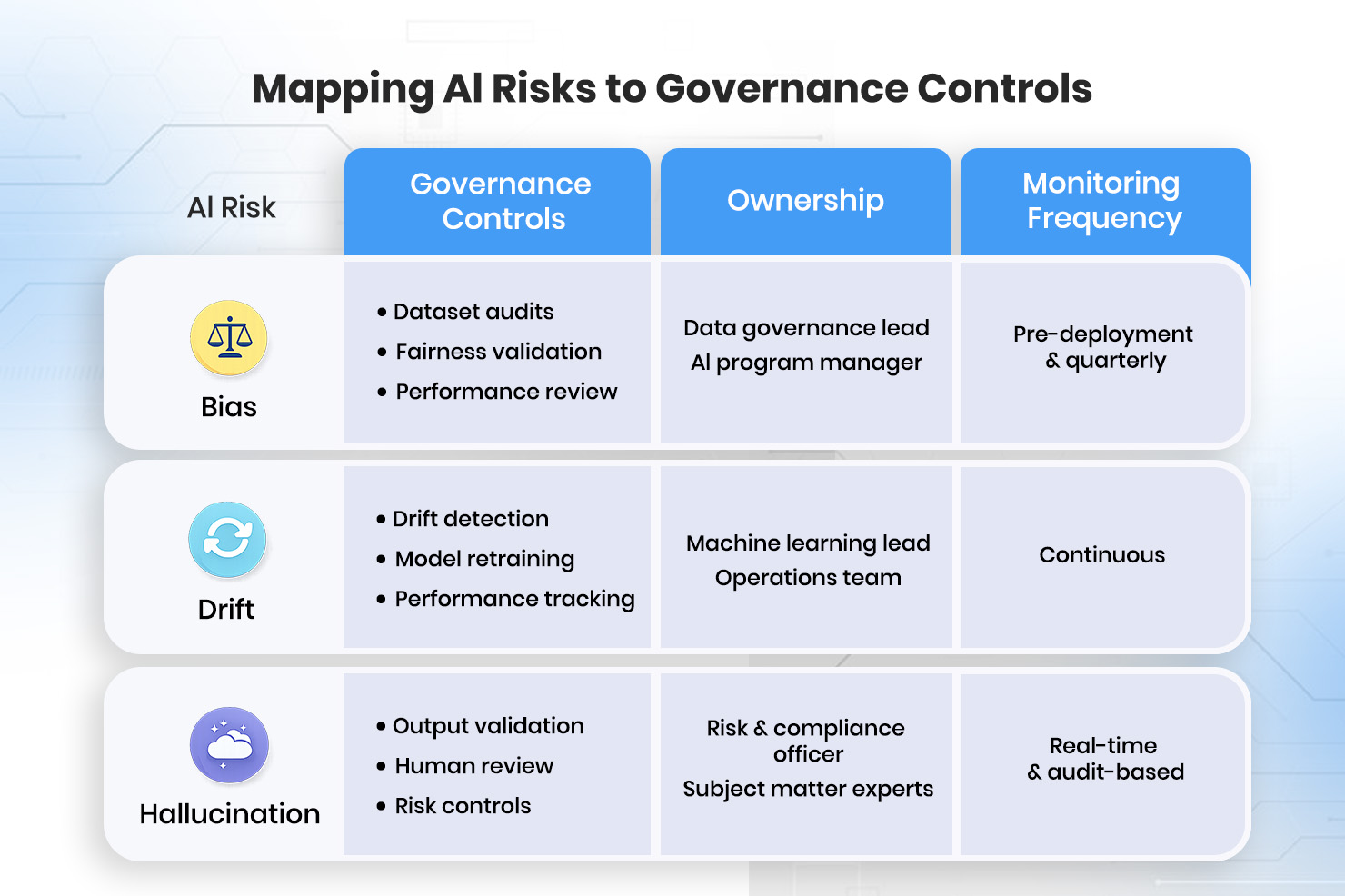

Bias, model drift, and hallucination represent different types of AI risks, but they share a common requirement: intentional governance. Treating AI risk as a single, abstract concern often results in vague controls and unclear accountability. Sustainable AI programs succeed when each specific risk is mapped to defined governance actions, ownership, and monitoring processes.

Effective governance begins by recognizing that different risks require different controls. Bias is best managed through data governance, validation practices, and ethical accountability. Model drift requires continuous monitoring, performance thresholds, and scheduled reviews. Hallucination demands usage boundaries, output validation, and human oversight. When these controls are applied consistently, AI systems become more predictable and easier to manage.

Program managers play a central role in operationalizing this mapping. They translate risk concepts into practical workflows and ensure governance is embedded into delivery rather than added as an afterthought. This includes defining who monitors which risks, how issues are escalated, and how remediation decisions are made. Without this clarity, even well-designed controls fail in practice.
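
The mapping itself can be kept as a small, reviewable record. The Python sketch below represents a hypothetical risk register that pairs each risk with the controls listed above and with named owners for monitoring, escalation, and remediation decisions, then reports any entry that has no controls or is missing an owner. The field names and role names are assumptions; in practice this record often lives in a governance tool or spreadsheet rather than in code.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class RiskEntry:
    risk: str
    controls: List[str]
    monitored_by: str
    escalates_to: str
    remediation_decided_by: str


# Hypothetical register; the hallucination entry lacks an escalation owner.
REGISTER = [
    RiskEntry("bias",
              ["data governance", "validation practices", "ethical accountability"],
              "data_team", "compliance", "business_owner"),
    RiskEntry("model drift",
              ["continuous monitoring", "performance thresholds", "scheduled reviews"],
              "ml_ops", "program_manager", "model_owner"),
    RiskEntry("hallucination",
              ["usage boundaries", "output validation", "human oversight"],
              "product_team", "", "program_manager"),
]


def governance_gaps(register):
    """List entries that lack controls or an accountable owner."""
    gaps = []
    for entry in register:
        if not entry.controls:
            gaps.append(f"{entry.risk}: no controls defined")
        # Every field other than the risk name and controls is an owner role.
        for f in fields(entry):
            if f.name not in ("risk", "controls") and not getattr(entry, f.name):
                gaps.append(f"{entry.risk}: missing {f.name}")
    return gaps


print(governance_gaps(REGISTER))
```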

Mapping risks to governance also strengthens trust. Leadership gains confidence when AI behavior is transparent and managed, regulators see evidence of responsible oversight, and users understand how and when AI outputs should be relied upon. This trust is essential for scaling AI beyond pilot projects into core business processes.

Well-designed governance can accelerate AI adoption rather than act as a bottleneck. By establishing clear guardrails up front, organizations shift their focus from reactive crisis management to proactive value creation, and program managers can spend their time on optimization and innovation instead of constant firefighting.

Ultimately, sustainable AI programs are built on visibility, accountability, and adaptability. By mapping bias, drift, and hallucination risks to specific governance controls, organizations create AI systems that are robust, dependable, ethical, and supportive of long-term business objectives.

About the Author

Imran Afzal

Imran Afzal, CEO of UTCLI Solutions and a best-selling IT instructor, has trained over a million students worldwide in IT, systems administration, and career development. An educator, mentor, and entrepreneur, he brings over 25 years of experience in systems engineering, leadership, and training across Fortune 500 companies in finance, fashion, and tech media.

His IT journey began in 2001 at Time Warner, NYC, and has since included leading major projects like data center migrations, VMware deployments, monitoring tool implementations, and Amazon cloud migrations. Imran holds a degree in Computer Information Systems from Baruch College (CUNY) and an MBA from NYIT.

Certified in Linux System Administration, VMware, UNIX, and Windows Server, Imran has been training students since 2010 through top-rated online courses and on-site programs. His mentorship has helped thousands secure IT jobs.

Beyond IT, Imran is dedicated to education and community service, founding a nonprofit school for children (pre-K to 10th grade).