Board-Level Metrics for Measuring AI Accountability

- Brian C. Newman

- Responsible AI Governance

Boards are being asked to oversee artificial intelligence (AI) without the signals they need to do it well.

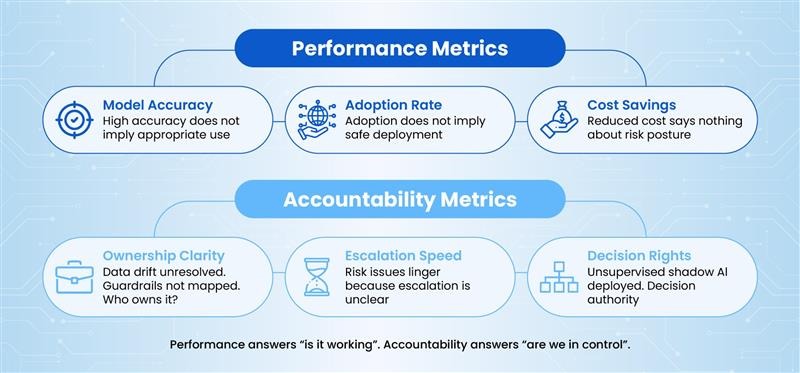

Most AI reporting still focuses on performance factors, including accuracy, adoption, and cost savings. These metrics matter operationally, but they do not answer the questions boards are responsible for answering: who owns the risk, who makes decisions when things go wrong, how fast issues surface, and whether AI initiatives remain aligned to approved intent.

This is an accountability problem, not a technology problem.

This article explains what board-level AI accountability metrics look like, why traditional IT and digital metrics fail, and how boards can use a small number of well-chosen indicators to improve oversight without slowing execution.

The Board's Real AI Problem Is Accountability, Not Performance

Boards do not manage models. They govern risk, capital, and reputation.

When AI failures occur, the root cause is rarely a poorly tuned algorithm. It is unclear ownership, diffused decision rights, delayed escalation, or governance that exists on paper but not in practice.

Performance metrics tell boards whether systems are working. Accountability metrics tell boards whether the organization is in control.

Without accountability signals, boards are forced into reactive oversight. They learn about AI issues after harm occurs. At that point, intervention is expensive, and credibility is damaged.

When AI projects stall, it is almost never because the underlying models cannot be tuned. It is because the organization cannot clearly say who owns which decisions, what guardrails apply, and when to escalate. According to HRbrain’s summary of an MIT report, about 5% of enterprise generative AI initiatives scale successfully, with misaligned goals, unclear ownership, and outdated workflows cited as primary reasons for failure rather than model performance (HRbrain, 2026).

Effective AI governance starts with measurements that reflect how accountability actually functions inside the enterprise. This means going beyond traditional performance metrics and incorporating indicators that reveal whether the right ownership structures, decision rights, and escalation paths are in place.

By focusing on how accountability is established, assigned, and executed across AI initiatives, organizations can provide boards with the insights they need to ensure that AI systems are not only performing well but are also being managed responsibly and ethically.

What AI Accountability Means in a Board Context

Accountability is often confused with responsibility or control. They are not the same.

Responsibility refers to the duties and tasks that must be performed. The responsible party is the person or team that executes them. Control refers to mechanisms that constrain behavior. Accountability refers to the accountable party who owns outcomes and consequences.

For boards, AI accountability operates at the program level. It is not limited to the model or tool level.

Boards need to know whether AI initiatives have clear owners who can explain intent, risk posture, and trade-offs. They need confidence that decisions are made deliberately and escalated appropriately.

AI accountability sits squarely within fiduciary duty. It affects regulatory exposure, customer trust, and long-term value creation.

Why Traditional IT and Digital Metrics Break Down for AI

Many organizations reuse IT governance metrics for AI oversight. This creates blind spots.

Project delivery metrics focus on scope, schedule, and budget. AI risk often materializes after deployment, when models interact with real users, evolving data, and operational constraints.

Model performance metrics are narrow. High accuracy does not imply appropriate use. It does not indicate whether bias, misuse, or unintended consequences are being managed.

Quarterly reporting cycles are also misaligned. AI risks emerge continuously. Waiting for lagging indicators defeats the purpose of oversight.

Understanding why existing metrics fail clarifies what replacement metrics must do differently. Boards need metrics that reflect lifecycle accountability, not delivery milestones.

Principles for Board-Appropriate AI Metrics

Not every AI metric belongs in the boardroom. Effective board-level metrics share four characteristics.

First, they answer board questions, not technical ones.

Second, they focus on ownership, escalation, and decision latency. These are leading indicators of failure.

Third, they are consistent across business units and use cases. Boards cannot oversee bespoke dashboards.

Fourth, they trigger action. A metric that cannot change behavior is noise.

Boards should favor a small, stable set of indicators over exhaustive reporting.

Core Categories of Board-Level AI Accountability Metrics

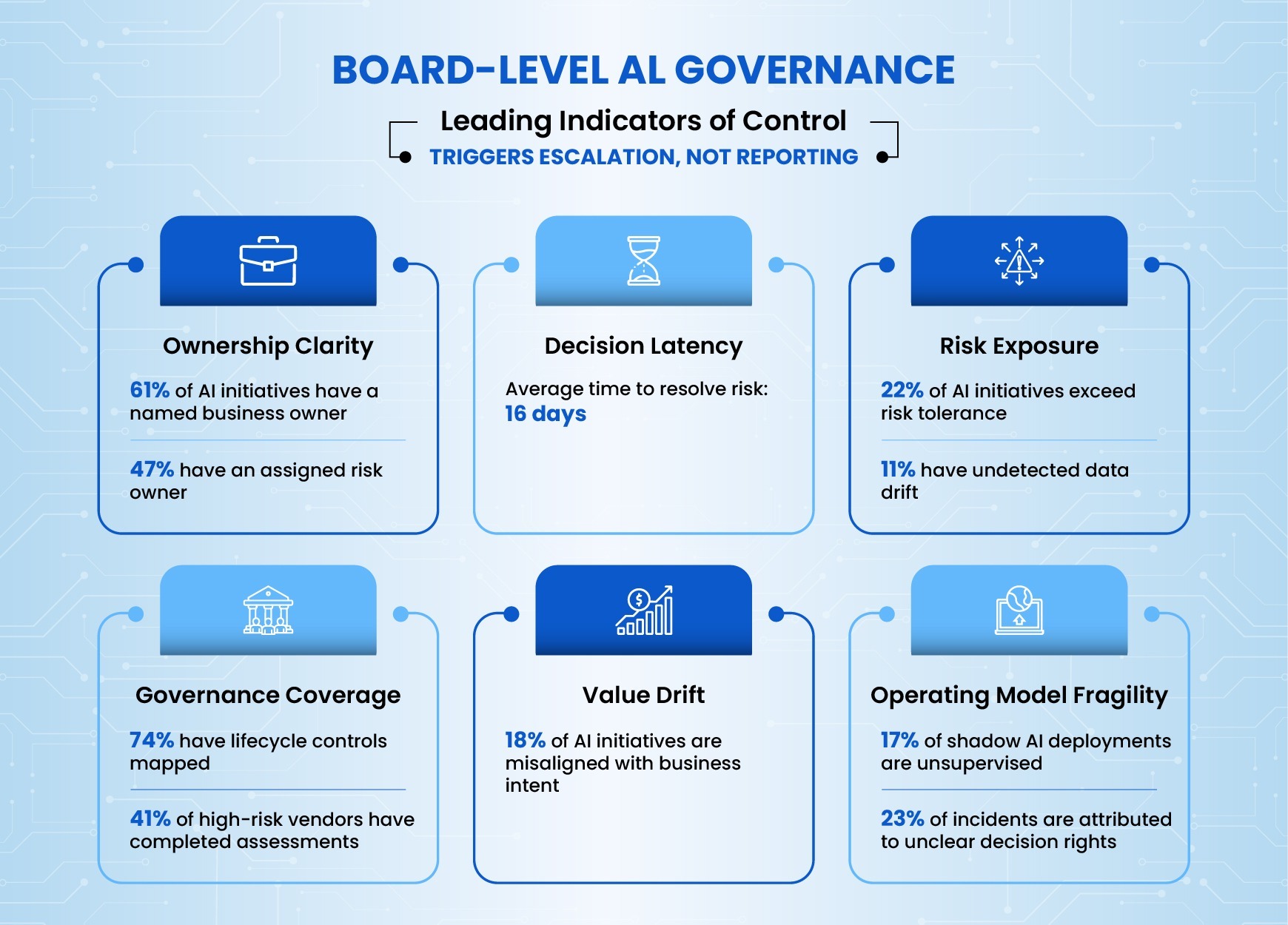

AI accountability metrics cluster naturally into a few categories. Together, they provide a coherent view of control without requiring micromanagement.

Ownership and Decision Rights Metrics

These metrics answer a basic question: Does anyone truly own this AI initiative?

Examples include the percentage of AI initiatives with a named business owner. That person should be accountable for outcomes and empowered to halt or redirect the initiative, not merely a sponsor focused on delivery timelines.

Other examples include the clarity of escalation paths for AI-related incidents and the average time to decision for material AI risk issues.

Persistent gaps here are a warning sign. When ownership is unclear, issues linger. Decisions stall. Accountability diffuses.

Boards should expect ownership clarity early, not after deployment.
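The two ownership indicators described above can be computed from a simple initiative register. The following is a minimal sketch, not a prescribed method: the `Initiative` record, its field names, and the sample portfolio are hypothetical, and it assumes the organization records whether each owner has authority to halt or redirect the work.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Initiative:
    """Hypothetical register entry for one AI initiative."""
    name: str
    business_owner: Optional[str]  # named accountable owner, None if unassigned
    owner_can_halt: bool           # owner is empowered to halt or redirect


def ownership_coverage(initiatives: list[Initiative]) -> float:
    """Share of initiatives with a named owner who can halt or redirect."""
    if not initiatives:
        return 0.0
    owned = sum(1 for i in initiatives if i.business_owner and i.owner_can_halt)
    return owned / len(initiatives)


def mean_days_to_decision(days_open: list[float]) -> float:
    """Average days from raising a material AI risk issue to a decision."""
    if not days_open:
        return 0.0
    return sum(days_open) / len(days_open)


# Illustrative data only.
portfolio = [
    Initiative("claims-triage", "VP Claims", True),
    Initiative("chat-assistant", "Head of Service", False),  # sponsor only
    Initiative("pricing-model", None, False),                # no named owner
    Initiative("fraud-scoring", "Chief Risk Officer", True),
]
coverage = ownership_coverage(portfolio)
latency = mean_days_to_decision([3, 10, 5])
print(f"Ownership coverage: {coverage:.0%}, mean days to decision: {latency:.1f}")
```

Counting a sponsor without halt authority as "not owned" is a deliberate choice in this sketch, since it mirrors the distinction between an accountable owner and a delivery sponsor.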

Governance Coverage Metrics

Governance coverage metrics focus on whether AI initiatives are operating within defined guardrails.

Useful indicators include the proportion of AI initiatives mapped to governance controls across the lifecycle, which requires more than policy acknowledgment. They also include the number of governance exceptions granted, particularly exceptions that bypass risk review or extend pilot timelines indefinitely, and how many of those exceptions are reviewed versus silently accepted.

Another important signal is governance debt. Governance debt accumulates when an AI initiative launches with the understanding that data lineage documentation will be completed later, then operates for six months without that documentation being addressed. It accumulates when model validation procedures are deferred to allow faster deployment, creating technical debt in the governance layer itself.

Boards should track how many initiatives carry this governance debt, what specific controls have been deferred, and for how long these gaps persist. Initiatives that continue operating while governance gaps accumulate represent growing risk exposure.

Boards should be more concerned about silent exceptions than visible ones.
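Governance debt and silent exceptions can both be tracked from a log of deferred controls and granted exceptions. The sketch below is illustrative only: the `DeferredControl` record, the 90-day aging limit, and the sample entries are assumptions made for the example, not figures from any standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DeferredControl:
    """Hypothetical log entry for a control postponed at launch."""
    initiative: str
    control: str
    deferred_on: date


def debt_report(deferred: list[DeferredControl], as_of: date, max_age_days: int = 90):
    """Return (initiatives carrying debt, deferred controls older than the limit)."""
    carrying = sorted({d.initiative for d in deferred})
    overdue = [d for d in deferred if (as_of - d.deferred_on).days > max_age_days]
    return carrying, overdue


def silent_exception_ratio(granted: int, reviewed: int) -> float:
    """Share of granted exceptions that never received a review."""
    if granted == 0:
        return 0.0
    return (granted - reviewed) / granted


# Illustrative data only.
log = [
    DeferredControl("chat-assistant", "data lineage documentation", date(2025, 1, 15)),
    DeferredControl("pricing-model", "model validation", date(2025, 6, 1)),
]
carrying, overdue = debt_report(log, as_of=date(2025, 7, 15))
silent = silent_exception_ratio(granted=10, reviewed=7)
for item in overdue:
    print(f"{item.initiative}: '{item.control}' deferred since {item.deferred_on}")
```

Reporting the age of each deferred control, rather than a single count, keeps attention on how long gaps persist.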

Risk Exposure and Control Effectiveness Metrics

These metrics track how AI risk is being managed in practice.

Examples include the distribution of AI risks across accepted, mitigated, and deferred status, the concentration of risk across vendors, platforms, or data sources, and the frequency and severity of AI-related incidents.

Near-miss reporting is especially valuable. Near misses reveal weak controls before harm occurs.

Boards should treat declining near-miss reporting as a risk signal, not a success indicator.

Declining reports often mean issues are no longer surfacing, not that controls have improved. A healthy AI program maintains steady or increasing near-miss visibility as teams develop stronger risk awareness.
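One way to surface a decline in near-miss reporting is a simple trend check on quarterly report counts. This is a minimal sketch under stated assumptions: the function name, the three-quarter window, and the sample counts are all hypothetical, and a real program would likely normalize counts by the number of systems in production.

```python
def near_miss_declining(quarterly_counts: list[int], periods: int = 3) -> bool:
    """True if report counts fell in each of the last `periods` quarter-over-quarter steps."""
    recent = quarterly_counts[-(periods + 1):]
    if len(recent) < periods + 1:
        return False  # not enough history to call a trend
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))


# Illustrative data only: near-miss reports per quarter, oldest first.
reports = [12, 14, 11, 8, 5]
needs_attention = near_miss_declining(reports)
print("Review reporting culture" if needs_attention else "No sustained decline")
```

The flag prompts a question about whether issues are still surfacing; it does not by itself show that controls have weakened.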

Value Realization and Value Drift Metrics

AI initiatives often drift from their original value intent.

Metrics in this category include alignment between approved value cases and realized outcomes.

This means comparing the business outcomes boards approved during funding against actual results measured at defined intervals.

For example, if a customer service AI was approved based on reducing call handle time by 20%, value alignment tracking verifies whether that reduction materialized and whether the model continues delivering it over time. Other metrics in this category include the rate of scope expansion without re-approval and the number of initiatives continuing without validated value hypotheses.

Boards should ask a hard question: When do we stop?

Sunsetting underperforming AI programs is a sign of maturity, not failure.
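The call handle time example above can be turned into a simple value drift check. The sketch below assumes a hypothetical 300-second baseline, three interval measurements, and a tolerance of 80% of the approved reduction; none of these figures come from the article beyond the 20% target.

```python
def realization_ratio(baseline: float, measured: float, approved_reduction: float) -> float:
    """Realized reduction divided by approved reduction (1.0 means on target)."""
    if baseline <= 0 or approved_reduction <= 0:
        raise ValueError("baseline and approved_reduction must be positive")
    realized = (baseline - measured) / baseline
    return realized / approved_reduction


def drifting_intervals(baseline: float, measurements: list[float],
                       approved_reduction: float, tolerance: float = 0.8) -> list[int]:
    """Indexes of review intervals where realized value fell below the tolerance."""
    return [
        i for i, m in enumerate(measurements)
        if realization_ratio(baseline, m, approved_reduction) < tolerance
    ]


# Illustrative data only: average handle time in seconds at each review interval.
BASELINE_SECONDS = 300.0
handle_time_by_interval = [240.0, 246.0, 255.0]
flagged = drifting_intervals(BASELINE_SECONDS, handle_time_by_interval, approved_reduction=0.20)
print(f"Intervals below tolerance: {flagged}")
```

A flagged interval is a natural trigger for the "when do we stop?" conversation, or for re-approval of a revised value case.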

Operating Model Health Metrics

These metrics surface structural weaknesses that affect AI accountability.

Examples include dependency concentration on key individuals or vendors. Dependency concentration appears when a single vendor relationship supports 70% of production AI workloads, or when three individuals hold all the expertise required to troubleshoot model failures across multiple critical systems. This creates fragility in accountability because ownership cannot transfer and risk cannot be distributed. Other examples include handoff failure rates across teams and the number of AI initiatives operating outside defined delivery models.

When AI programs succeed only because of heroics, accountability is fragile.

Boards should look for resilience, not brilliance.
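Dependency concentration of the kind described above reduces to a share calculation over a workload inventory. This is a rough sketch: the `top_share` helper and the vendor names are hypothetical, and the sample inventory is constructed to reproduce the 70% case.

```python
from collections import Counter


def top_share(assignments: list[str]) -> tuple[str, float]:
    """Return the most common dependency and its share of all workloads."""
    if not assignments:
        raise ValueError("no workloads provided")
    name, count = Counter(assignments).most_common(1)[0]
    return name, count / len(assignments)


# Illustrative data only: the vendor behind each production AI workload.
workload_vendors = ["VendorA"] * 7 + ["VendorB"] * 2 + ["in-house"]
vendor, share = top_share(workload_vendors)
print(f"{vendor} supports {share:.0%} of production AI workloads")
```

The same function can be applied to a list of the individuals able to troubleshoot each critical system, which gives a comparable view of key-person dependency.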

Metrics Boards Should Avoid

Some metrics create false confidence.

Pure model performance metrics belong at the management level. Tool usage metrics are often vanity metrics. Vendor-provided dashboards rarely reflect enterprise accountability.

Boards should also avoid metrics that cannot be acted upon. If leadership cannot change behavior in response, the metric does not belong in board reporting.

Simplicity improves oversight.

How Boards Should Review AI Accountability Metrics

Metrics are only useful if they are reviewed correctly.

Boards should integrate AI accountability reporting into existing risk and audit committee structures. AI should not be treated as a separate novelty topic.

Reporting cadence should match risk velocity. High-impact AI initiatives may require more frequent review.

Boards also need clarity on what constitutes a material AI issue. This should be defined in advance, not debated during a crisis.

When thresholds are crossed, escalation should be automatic.
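Automatic escalation presupposes thresholds that are agreed in advance and checked mechanically. The following sketch shows one possible shape for that check; the `Threshold` record, the metric names, and the limits are hypothetical, and treating an unreported metric as an escalation is a design assumption of this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Hypothetical pre-agreed limit for one accountability metric."""
    metric: str
    limit: float
    higher_is_worse: bool = True


def breaches(readings: dict[str, float], thresholds: list[Threshold]) -> list[str]:
    """List every threshold crossed, plus any metric that was not reported."""
    found = []
    for t in thresholds:
        value = readings.get(t.metric)
        if value is None:
            found.append(f"{t.metric}: not reported")  # assumption: silence escalates
            continue
        crossed = value > t.limit if t.higher_is_worse else value < t.limit
        if crossed:
            found.append(f"{t.metric}: {value} crossed limit {t.limit}")
    return found


# Illustrative thresholds and readings only.
agreed = [
    Threshold("days_to_decision", 14),
    Threshold("ownership_coverage", 0.9, higher_is_worse=False),
    Threshold("overdue_deferred_controls", 0),
]
current = {"days_to_decision": 21, "ownership_coverage": 0.95}
escalations = breaches(current, agreed)
for line in escalations:
    print("ESCALATE ->", line)
```

Defining the limits as data rather than as judgment calls is what lets materiality be settled before a crisis rather than during one.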

Management Implications of Board-Level AI Metrics

Metrics shape behavior.

When accountability is measured, executives make clearer decisions. Ownership becomes explicit. Trade-offs are documented. Risk conversations happen earlier.

There is a risk of metric gaming. Boards should look for sudden improvements without corresponding operational evidence.

Transparency generally improves discipline, even when metrics reveal uncomfortable truths.

AI programs benefit from sunlight.

How CAIPM and CRAGE Inform Accountability Measurement

Two programs help create a shared accountability language across enterprises.

EC-Council’s Certified AI Program Manager (CAIPM) program emphasizes lifecycle ownership, decision framing, and measurable accountability. It trains leaders to think in terms of programs, not pilots.

The Certified Responsible AI Governance & Ethics (CRAGE) program reinforces risk management, ethical considerations, and oversight alignment. It provides structure for evaluating impact and control effectiveness.

When leaders across business, technology, and risk share these frameworks, accountability metrics become easier to define and harder to ignore.

Boards benefit when management speaks a common language.

Closing Perspective: From AI Oversight to AI Confidence

Boards do not need more data. They need better signals.

As AI becomes embedded across core operations, accountability will matter more than performance. Organizations that measure it well will move faster with confidence.

Those that do not will continue to learn the hard way.

Reference
