AI Security: Safeguarding the Future of AI


As AI becomes more integrated into critical industries, securing it is essential for protecting users and organizations alike. Securing AI systems helps keep data confidential, preserves system integrity and availability, and supports compliance with applicable privacy regulations. This involves safeguarding AI data, models, and algorithms from malicious attacks and closing vulnerabilities that could lead to breaches or system manipulation. Typical measures include continuous monitoring, regular audits, robust encryption, and secure coding practices.

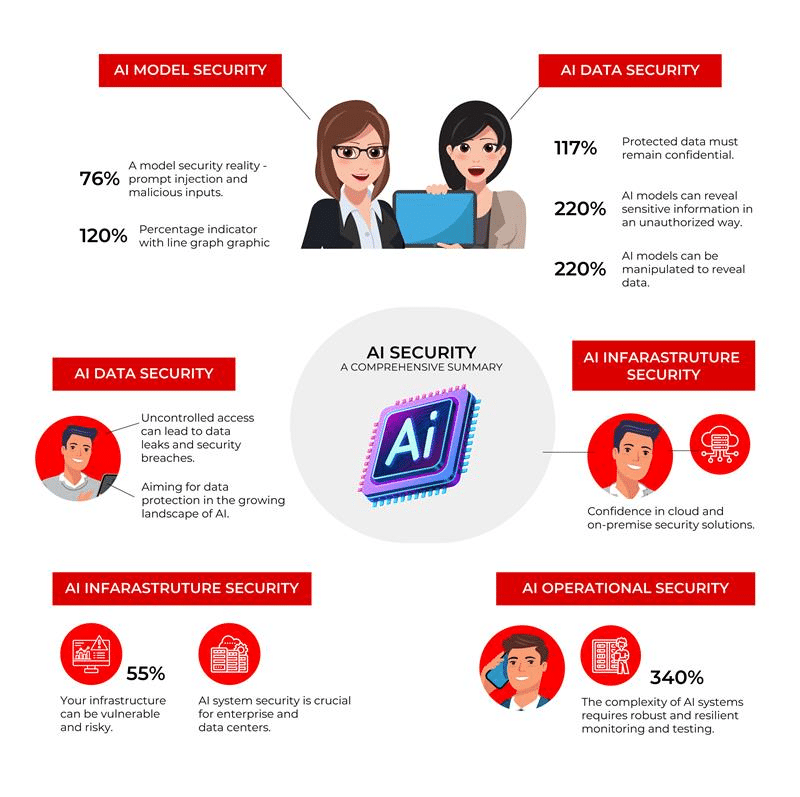

Key Aspects of AI Security

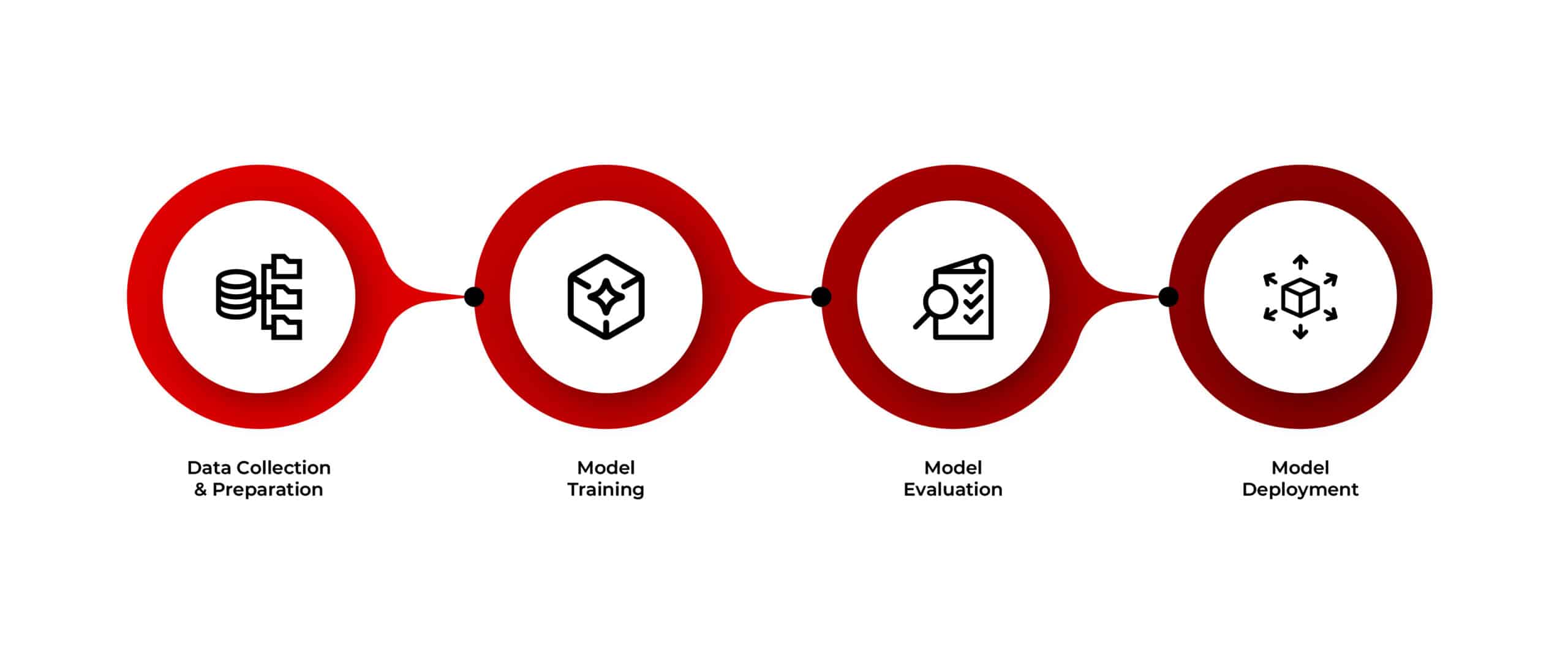

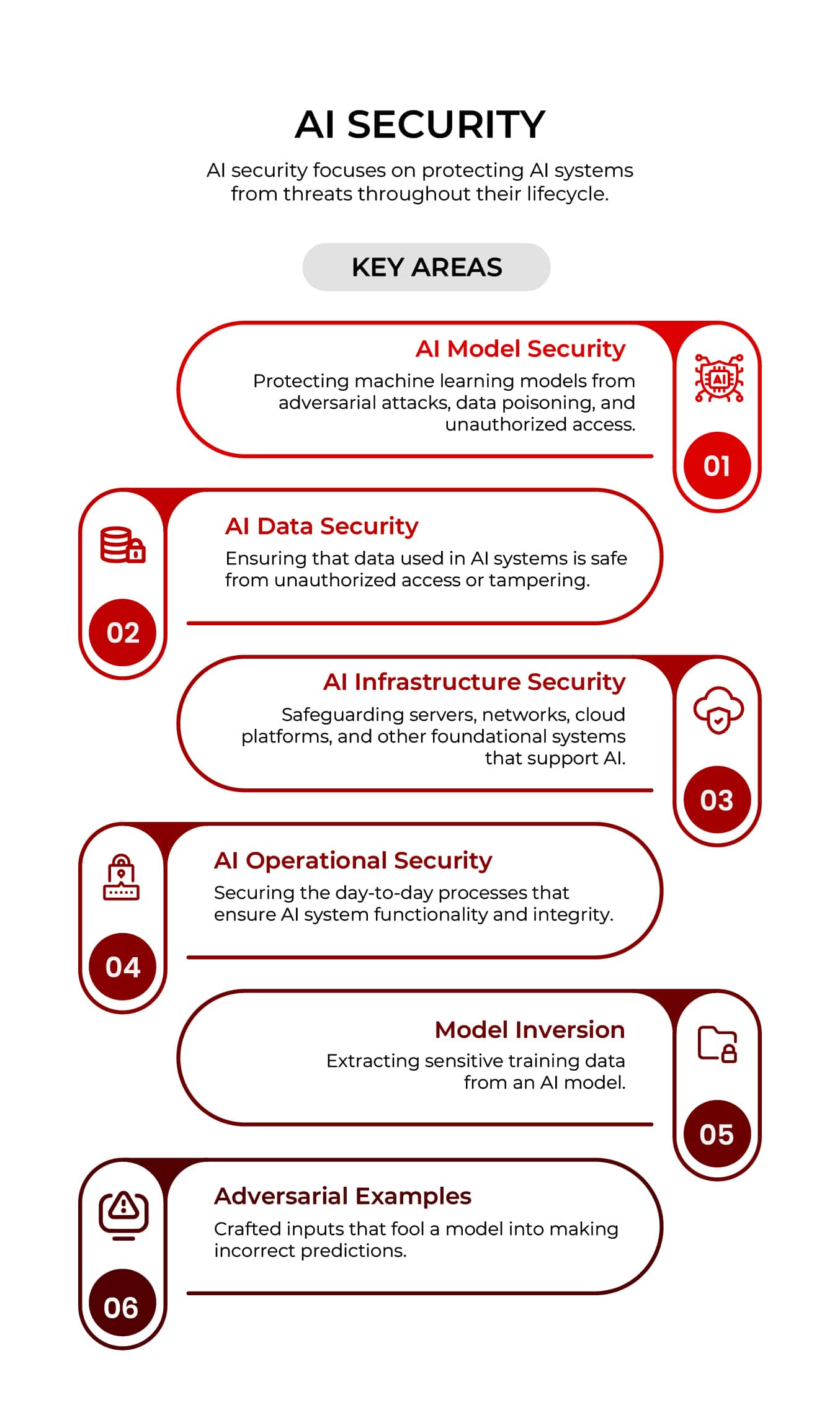

AI security refers to the technologies, frameworks, and practices put in place to protect AI systems from threats throughout their lifecycle. It addresses risks in data sourcing, model training and evaluation, deployment, and ongoing monitoring. Securing each phase with strong controls, audits, and governance is vital for maintaining trust, integrity, and resilience. AI security spans the following key areas:

- AI Models: Machine learning models can be manipulated or stolen through adversarial attacks, data poisoning, and unauthorized access. Ensuring the integrity of training data is critical to avoid biased or flawed models. Best practices for securing models include robust training, encryption, access controls, anomaly detection, continuous monitoring, and vulnerability assessments. Together, these practices support reliable, ethical, and trustworthy AI performance in real-world applications.

- AI Data Collection and Preparation: AI data can be misused through unauthorized access, breaches, or tampering. Data security can be enhanced through strong encryption, secure storage, and strict access controls. Implementing strict data validation and provenance tracking helps maintain data quality and trustworthiness. Effective data security also helps maintain privacy, compliance with regulations such as the General Data Protection Regulation (GDPR), and the integrity and reliability of AI models and their outputs.

- AI Model Evaluation Process: Evaluating AI models involves assessing their vulnerabilities, biases, and robustness against adversarial attacks. Ensuring the evaluation process is secure helps prevent exploitation or manipulation of the model’s performance. Techniques like stress testing, fairness audits, and privacy checks are crucial for identifying potential flaws. This ensures the model’s reliability and ethical compliance before deployment.

- Security in AI Deployment: Security during deployment is critical to ensure that AI systems operate safely and securely in real-world environments. It involves safeguarding against threats such as model inversion, adversarial attacks, and unauthorized access to the deployed model. Securing APIs, implementing strong access controls, and regularly monitoring system performance for anomalies are key strategies. Continuous updates and secure deployment pipelines help mitigate risks, ensuring that AI systems remain resilient and trustworthy throughout their lifecycle.

- AI Infrastructure: The foundational systems that support AI include servers, networks, cloud platforms, and data pipelines. AI infrastructure security ensures that these components are safeguarded from cyberthreats, unauthorized access, and system failures. Key measures include encryption, access controls, monitoring, and incident response. Strong infrastructure security is vital for reliable, secure, and scalable AI operations.

- AI Operations: AI operational security involves protecting the day-to-day processes that support AI system functionality from unauthorized changes, insider threats, and operational disruptions. This includes securing development environments, managing access controls, monitoring system performance, and detecting anomalies. Strong operational security ensures the reliability, integrity, and resilience of AI systems throughout their lifecycle and deployment.
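
The data validation and provenance tracking mentioned under AI Data Collection and Preparation can be illustrated with a small sketch. It assumes a simple scheme in which each training record is hashed into a manifest when the data is first vetted, so later modifications can be detected. The function names, the manifest layout, and the `example-vendor-feed` source label are hypothetical and chosen only for illustration.

```python
import hashlib
import json


def record_digest(record: dict) -> str:
    """Return a SHA-256 hex digest of a record's canonical JSON form."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_manifest(records: list, source: str) -> dict:
    """Record where the data came from plus one digest per record."""
    return {"source": source, "digests": [record_digest(r) for r in records]}


def find_tampered(records: list, manifest: dict) -> list:
    """Return indices of records whose digest no longer matches the manifest."""
    digests = manifest["digests"]
    if len(records) != len(digests):
        raise ValueError("record count differs from manifest")
    return [i for i, r in enumerate(records) if record_digest(r) != digests[i]]


# Hypothetical training records and source label, for illustration only.
records = [
    {"text": "good product", "label": 1},
    {"text": "poor quality", "label": 0},
]
manifest = build_manifest(records, source="example-vendor-feed")

# Simulate a label-flipping modification of the second record.
tampered = [dict(r) for r in records]
tampered[1]["label"] = 1

print(find_tampered(records, manifest))   # untouched data: no mismatches
print(find_tampered(tampered, manifest))  # index of the modified record
```

A check like this only detects changes made after the manifest was created. It does not show that the data was trustworthy at the source, so it complements access controls and validation rather than replacing them.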

Why AI Security Matters

AI technologies are increasingly being integrated into high-risk domains such as healthcare, finance, and autonomous vehicles. Compromised models or data can harm organizations and clients. Strong AI security helps ensure that AI systems are reliable, ethical, and compliant with regulations, mitigating risks such as bias, adversarial attacks, and privacy violations to maintain trust and safety.

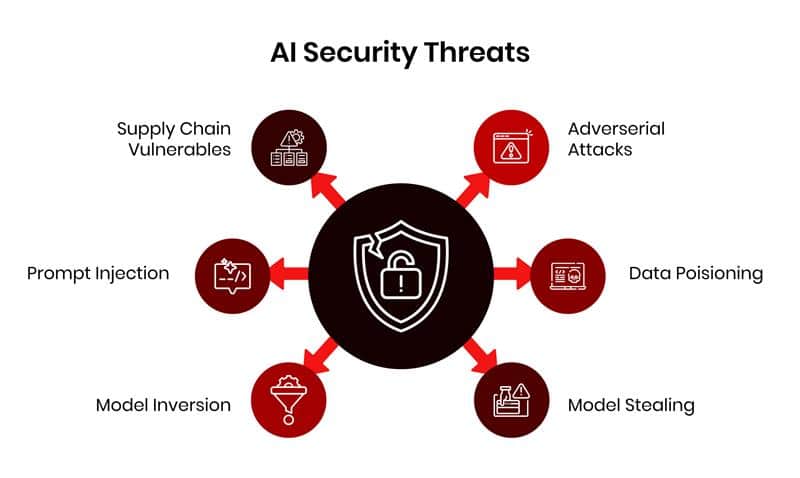

Types of Threats to AI Systems

AI systems face an expanding range of threats as both the technology and the threat landscape continue to evolve. The following are common categories of threats to AI systems and their impacts.

- AI Adversarial Attacks: Adversarial attacks manipulate input data to deceive machine learning models, often without detection. Subtle alterations can cause models to misclassify images, text, or other inputs, posing security risks in applications like facial recognition or autonomous driving. Recognizing and countering adversarial attacks improves the resilience and trustworthiness of AI systems after deployment.

- AI Data Poisoning: In this type of attack, malicious data is injected into a model’s training dataset to corrupt its learning process. This can lead to incorrect outputs or vulnerabilities during deployment. Data poisoning poses significant risks to AI integrity, especially in systems that rely on crowdsourced or open-source data for training and continuous learning.

- AI Model Stealing: This is an attack where adversaries copy proprietary machine learning models by querying them and then analyzing their responses. Model stealing compromises intellectual property, bypasses costly training, and can expose sensitive data. This poses a threat to the confidentiality and competitive edge of AI systems, making it essential to implement defenses such as query rate limiting and output obfuscation.

- AI Model Inversion: In AI model inversion, adversaries exploit model outputs to reconstruct sensitive input data, such as personal information or images. By analyzing predictions, attackers can infer attributes or recreate training data. This poses privacy risks, especially in models trained on confidential data. Safeguarding against inversion requires techniques like differential privacy and limiting access to model outputs.

- AI Prompt Injection (LLMs): Prompt injection in large language models (LLMs) is a technique where attackers craft inputs that manipulate the model’s behavior, often triggering unintended actions. These malicious prompts may contain hidden instructions that override system safety filters or extract sensitive data, posing serious security and ethical risks. Defense techniques like input sanitization, context isolation, user intent validation, and continuous testing can help make LLM interactions safer and more reliable in the real world.

- AI Supply Chain Vulnerabilities: Vulnerabilities in the components used to build, train, and deploy AI systems, including data sets, models, and third-party tools, can be exploited. Threat techniques include data tampering, malicious code, and compromised dependencies. These risks can lead to model manipulation or failures, making supply chain security essential for maintaining AI integrity, trust, and performance across the development lifecycle.
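
As one illustration of the defenses named in the list above, the query rate limiting and output obfuscation suggested against model stealing can be sketched as follows. This is a minimal, single-process sketch under stated assumptions: the `QueryGuard` class, its parameters, and the client identifiers are hypothetical, and a production service would typically enforce limits in a shared store or at an API gateway.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class QueryGuard:
    """Sliding-window rate limiter that also coarsens returned scores."""

    def __init__(self, max_queries: int, window_seconds: float, decimals: int = 1):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self.decimals = decimals
        # client_id -> timestamps of that client's recent accepted queries
        self._history = defaultdict(deque)

    def allow(self, client_id: str, now: Optional[float] = None) -> bool:
        """Return True and record the query if the client is under its limit."""
        now = time.monotonic() if now is None else now
        history = self._history[client_id]
        # Drop timestamps that have aged out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_queries:
            return False
        history.append(now)
        return True

    def obfuscate(self, scores: dict) -> tuple:
        """Return only the top label and a rounded confidence, not all scores."""
        label = max(scores, key=scores.get)
        return label, round(scores[label], self.decimals)


# Hypothetical usage: at most 2 queries per client per 60 seconds.
guard = QueryGuard(max_queries=2, window_seconds=60.0)
decisions = [guard.allow("client-a", now=t) for t in (0.0, 1.0, 2.0, 60.0)]
response = guard.obfuscate({"cat": 0.8734, "dog": 0.1266})
print(decisions, response)
```

Returning only the top label with a coarse confidence gives an attacker less information per query, and the rate limit raises the cost of collecting enough responses to approximate the model. Neither measure prevents extraction on its own.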

The Future of AI Security

The future of AI security will see increasing complexity as more industries adopt AI systems. With the rise of AI-driven cyberattacks, defending against adversarial threats, data poisoning, and model manipulation will require more advanced techniques. Trends like explainable AI, AI-powered threat detection, and adaptive security models will become essential. Additionally, approaches such as federated learning and differential privacy can help safeguard sensitive data. Global regulatory frameworks will evolve to address ethical concerns, while collaboration between governments, tech companies, and researchers will help support the secure and responsible development of AI systems, mitigating risks while fostering innovation.
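
To make the differential privacy idea above more concrete, here is a minimal sketch of the Laplace mechanism applied to a counting query, which is one standard way to add calibrated noise to a statistic. The function name `private_count`, the sample ages, and the choice of `epsilon` are assumptions made for illustration and do not come from any particular library.

```python
import random


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count of the values that satisfy the predicate.

    A counting query changes by at most 1 when one record is added or
    removed (sensitivity 1), so the Laplace noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    # The difference of two independent exponential draws with rate epsilon
    # follows a Laplace distribution with scale 1 / epsilon.
    noise = rng.expovariate(epsilon) - rng.expovariate(epsilon)
    return true_count + noise


# Hypothetical data: how many people are aged 40 or over?
rng = random.Random(0)  # seeded only to make the sketch repeatable
ages = [23, 35, 41, 58, 62, 19, 47]
noisy = private_count(ages, lambda a: a >= 40, epsilon=1.0, rng=rng)
print(noisy)
```

A smaller `epsilon` adds more noise and gives stronger privacy, at the cost of accuracy. Real deployments also need to track the cumulative privacy budget across repeated queries, which this sketch omits.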

Conclusion

AI security is crucial for ensuring the safe and ethical operation of AI technologies. As AI systems grow in complexity and influence, addressing vulnerabilities such as adversarial attacks, data poisoning, and privacy breaches becomes increasingly important. A comprehensive approach involving secure data collection, robust model training, continuous evaluation, and deployment safeguards is necessary to protect AI systems from exploitation. Additionally, ongoing research, collaboration, and the development of regulatory frameworks will be key in addressing emerging threats and fostering trust. Prioritizing AI security will enable responsible use of AI, ensuring its benefits while minimizing risks to privacy, safety, and integrity.

About the Author

Ken Huang is a leading author and expert in AI applications and agentic AI security, serving as CEO and Chief AI Officer at DistributedApps.ai. He is Co-Chair of AI Safety groups at the Cloud Security Alliance and the OWASP AIVSS project, and Co-Chair of the AI STR Working Group at the World Digital Technology Academy. He is an EC Council instructor and Adjunct Professor at the University of San Francisco, teaching GenAI security and agentic AI security for data scientists, respectively. He coauthored OWASP’s Top 10 for LLM Applications and contributes to the NIST Generative AI Public Working Group. His books are published by Springer, Cambridge, Wiley, Packt, and China Machine Press, including Generative AI Security, Agentic AI Theories and Practices, Beyond AI, and Securing AI Agents. A frequent global speaker, he engages at major technology and policy forums.